The COVID-19 pandemic has affected us in many ways and has brought much of the world to a standstill. Preventing community spread makes social distancing essential. In this project, we address the problem of crowd gathering, which creates favorable conditions for community spread. In the highly successful #KeralaModel for COVID-19, social distancing and containment were among the major factors that helped flatten the curve.

Our motivation comes from the goal that Micron has set. As the contest description puts it: as the first wave of COVID-19 impacted every population across the globe, we learned many new things about transmission and prevention. We are better equipped to address the continued pandemic and need sustainable, low-cost solutions that can provide safe, preventive, and effective ways to protect people in workplaces, shopping areas, healthcare facilities, etc. UV light technology is one way to reduce exposure to surfaces that may carry viruses or bacteria, and it does not require human interaction to sanitize those surfaces.

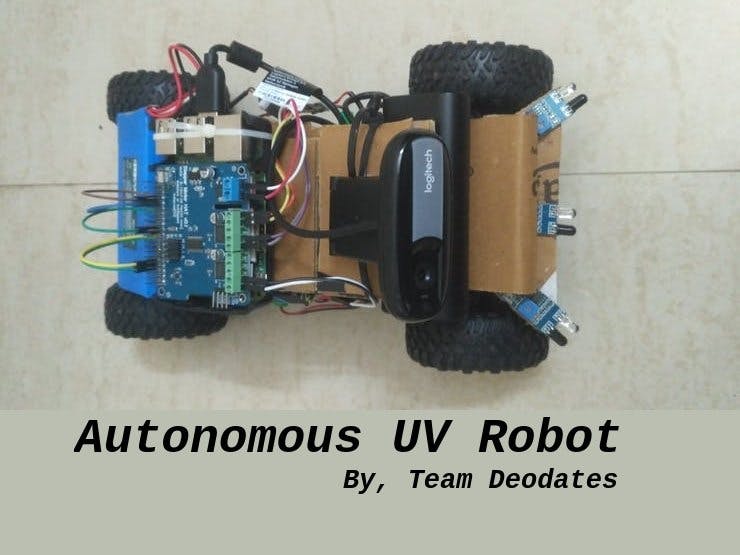

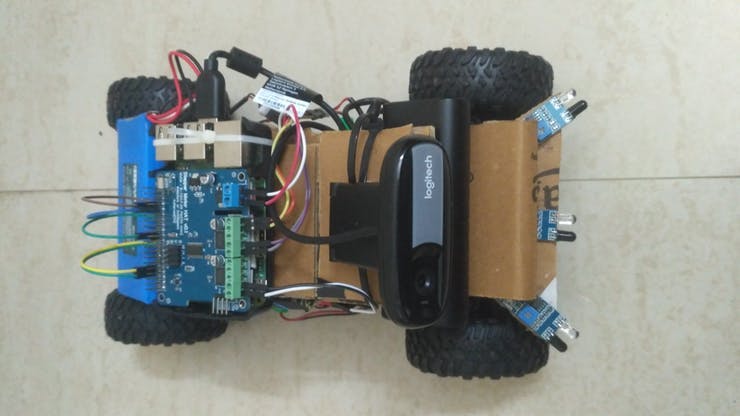

Workflow

Our project aims to develop a robot that can traverse a room autonomously or semi-autonomously, avoid obstacles, and disinfect the room. The robot is a miniature ground vehicle that can be driven either manually or autonomously. It can be any typical RC ground vehicle, preferably one with a brushed DC motor controlled by an ESC. The best example of this type is a Donkey Car. The advantage of a Donkey Car is that it comes with autonomous capability via a Raspberry Pi 3, which is achieved by training the car. For setting up the car, training it, and producing a trained model, please refer to the official guide here.

Hardware implementation

Two prototypes were developed. The first prototype used a Rock Crawler RC Car kit available here, and the second prototype used a Donkey Car Kit (HSP 94186 Brushed RC Car) available here.

The PCA9685 PWM driver that comes with the Donkey Car kit is sufficient to drive all the servos and motors in the kit. If you plan to attach more sensors or actuators, however, we suggest using a Raspberry Pi shield like the one we used, available here.

A U-Blox Neo-6M GPS (link) is attached to provide the position of the car. The interfacing of the GPS with the Raspberry Pi 3 is covered in this documentation. GPS- and compass-based autonomous navigation will be integrated later.
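The Neo-6M reports its position as NMEA text sentences over a serial link. As a minimal sketch of how one such sentence could be decoded on the Raspberry Pi, the snippet below validates the checksum of a GGA sentence and converts its latitude and longitude to decimal degrees. This is an illustration only, not the project's code; the function names `nmea_checksum_ok` and `parse_gga` are hypothetical, and reading lines from the serial port is left out.

```python
def nmea_checksum_ok(sentence: str) -> bool:
    """Check the XOR checksum that follows '*' in an NMEA sentence."""
    if not sentence.startswith("$") or "*" not in sentence:
        return False
    body, _, given = sentence[1:].partition("*")
    calc = 0
    for ch in body:
        calc ^= ord(ch)
    try:
        return calc == int(given.strip()[:2], 16)
    except ValueError:
        return False


def _to_degrees(value: str, hemisphere: str, deg_digits: int) -> float:
    """Convert NMEA (d)ddmm.mmmm plus hemisphere to signed decimal degrees."""
    degrees = int(value[:deg_digits])
    minutes = float(value[deg_digits:])
    decimal = degrees + minutes / 60.0
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence: str):
    """Return (lat, lon) in decimal degrees, or None without a valid fix."""
    if not nmea_checksum_ok(sentence):
        return None
    fields = sentence[1:].split("*")[0].split(",")
    if len(fields) < 7 or not fields[0].endswith("GGA"):
        return None
    if fields[6] in ("", "0"):  # fix quality 0 means no position fix yet
        return None
    try:
        lat = _to_degrees(fields[2], fields[3], 2)  # latitude: ddmm.mmmm
        lon = _to_degrees(fields[4], fields[5], 3)  # longitude: dddmm.mmmm
    except ValueError:
        return None
    return lat, lon
```

Each line read from the GPS serial port would be passed to `parse_gga`; a `None` result simply means the sentence was corrupted, was not a GGA sentence, or the receiver has no fix yet.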

We also attached a few more sensors: an HD camera for image processing, and IR and SONAR sensors for obstacle avoidance. Driving multiple SONAR sensors is more tedious than driving IR sensors, so IR is recommended in the short run.

Separate power supplies must be provided for the Raspberry Pi and the motor assembly. This keeps motor noise out of the Raspberry Pi's supply, where it may cause instability. Both supplies should still share a common ground.

The ground vehicle is also equipped with optional solar panel cells and an automatic solar battery charging circuit for seamless and extended periods of operation, enabling recharging on-the-go.

Software implementation

A Python program was developed to give custom commands to the ground vehicle in autonomous or semi-autonomous mode. This program runs on the onboard Raspberry Pi and controls the vehicle through the motor drivers.

The program can also control a pan-tilt servo system on which the camera can be mounted.
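To make the control path more concrete, here is a minimal sketch of the arithmetic involved in turning a throttle or servo command into a PCA9685 setting. It assumes the conventional RC pulse range of 1000 to 2000 µs with 1500 µs as neutral and a 60 Hz PWM frequency; these values, and the function names, are assumptions for illustration and would need calibrating against the actual ESC and servos. The only hardware fact relied on is that the PCA9685 has 12-bit (4096-step) resolution. Writing the resulting count to the chip is left to whichever driver library is in use.

```python
PCA9685_STEPS = 4096  # the PCA9685 divides each PWM period into 4096 steps


def _clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def pulse_us_to_counts(pulse_us: float, freq_hz: float = 60.0) -> int:
    """Convert a pulse width in microseconds to a 12-bit on-time count."""
    period_us = 1_000_000.0 / freq_hz
    counts = round(pulse_us / period_us * PCA9685_STEPS)
    return int(_clamp(counts, 0, PCA9685_STEPS - 1))


def throttle_to_pulse_us(throttle: float, min_us: float = 1000.0,
                         neutral_us: float = 1500.0,
                         max_us: float = 2000.0) -> float:
    """Map throttle in [-1, 1] to an ESC pulse width (assumed range)."""
    throttle = _clamp(throttle, -1.0, 1.0)
    if throttle >= 0:
        return neutral_us + throttle * (max_us - neutral_us)
    return neutral_us + throttle * (neutral_us - min_us)


def angle_to_pulse_us(angle_deg: float, min_us: float = 1000.0,
                      max_us: float = 2000.0,
                      max_angle_deg: float = 180.0) -> float:
    """Map a pan or tilt angle to a servo pulse width (assumed range)."""
    angle = _clamp(angle_deg, 0.0, max_angle_deg)
    return min_us + angle / max_angle_deg * (max_us - min_us)
```

For example, a neutral throttle becomes `pulse_us_to_counts(throttle_to_pulse_us(0.0))`, and centering the pan servo becomes `pulse_us_to_counts(angle_to_pulse_us(90))`. Keeping the mapping in pure functions like these makes it easy to check the numbers before any motor is connected.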

Manual control of the robot is implemented using a custom-developed mobile application, which also provides a live feed from the onboard HD camera. More details can be found in the coding section. The app supports both manual and automatic operation of the ground vehicle: manual control uses a virtual joystick in the app, while automatic operation relies on obstacle avoidance driven by the onboard sensors via the Python program.

Collision avoidance using stereo vision [Work in Progress]

A custom stereo camera was made from two Microsoft HD webcams and was calibrated.

Disparity mapping was carried out to estimate depth in the scene and thus detect obstacles.
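For a calibrated and rectified stereo pair, depth follows from disparity through the standard relation Z = f · B / d, where f is the focal length in pixels, B is the baseline between the two cameras, and d is the disparity in pixels. The sketch below applies that relation and flags an obstacle when enough pixels fall inside a stopping distance. It is an illustration under assumed values, not the project's implementation: the function names, the 0.5 m threshold, and the hit count are hypothetical, and computing the disparity map itself (for example with a block-matching routine) is out of scope here.

```python
def depth_from_disparity(disparity_px: float, focal_px: float,
                         baseline_m: float) -> float:
    """Depth in metres from disparity, using Z = f * B / d."""
    if disparity_px <= 0:
        # Zero or invalid disparity: treat the point as infinitely far away.
        return float("inf")
    return focal_px * baseline_m / disparity_px


def obstacle_ahead(disparities, focal_px: float, baseline_m: float,
                   stop_distance_m: float = 0.5, min_hits: int = 3) -> bool:
    """True if at least min_hits disparity samples are closer than the limit."""
    hits = sum(
        1 for d in disparities
        if depth_from_disparity(d, focal_px, baseline_m) < stop_distance_m
    )
    return hits >= min_hits
```

Requiring several hits rather than a single close pixel is a simple guard against speckle noise in the disparity map; the right threshold depends on the camera resolution and the matcher used.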

Collision avoidance using IR sensors

Three IR sensors were mounted on the robot for obstacle detection and collision avoidance. The sensor data was wrapped into a ROS message for easy analysis.

Evasion algorithm

The evasion algorithm runs in the ROS environment, where appropriate driving commands are issued to evade the obstacle. Suitable plugins can be used so that it is extensible to any autonomous vehicle.
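As an illustration of what such an evasion rule might look like, the sketch below maps three boolean IR readings (left, center, right; `True` means an obstacle is detected) to a throttle and steering command. This is a simple rule table written for this explanation, not the algorithm used on the robot; the `DriveCommand` type, the `evade` function, and the speed values are hypothetical, and the ROS publishing and subscribing code is omitted so the logic can be read and tested on its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriveCommand:
    throttle: float  # -1.0 (full reverse) to 1.0 (full forward)
    steering: float  # -1.0 (full left) to 1.0 (full right)


def evade(left: bool, center: bool, right: bool,
          cruise: float = 0.4) -> DriveCommand:
    """Pick a driving command from three IR obstacle flags."""
    if not (left or center or right):
        return DriveCommand(cruise, 0.0)        # path clear: drive straight
    if left and center and right:
        return DriveCommand(-cruise, 0.0)       # boxed in: back up
    if center:
        # Turn hard toward the free side; prefer right when both are free.
        return DriveCommand(cruise / 2, -1.0 if right else 1.0)
    if left and right:
        return DriveCommand(cruise / 2, 0.0)    # narrow gap: creep straight
    if left:
        return DriveCommand(cruise / 2, 0.5)    # veer right, away from left
    return DriveCommand(cruise / 2, -0.5)       # veer left, away from right
```

In a ROS setup, a node would call `evade` each time a new set of IR readings arrives and publish the result as a drive message. Because the decision is a pure function, it can be swapped for a different strategy without touching the sensor or motor code.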

UV disinfection

An appropriate UV disinfection module can be mounted on the robot and can be used to disinfect an entire room without any human intervention.

Conclusion

The robot can be modified further in the future to accommodate various other modules. Since the framework is based on ROS, the integration will be easier.
