Potholes are a serious issue in India: as per the statistics the author cites, 30% of people die due to potholes or a sequence of events triggered by a pothole. Potholes are formed by the force of water and abrasion: the road surface cracks, erodes, and eventually breaks open into a pothole. This makes potholes a major topic for researchers in road safety and traffic control.

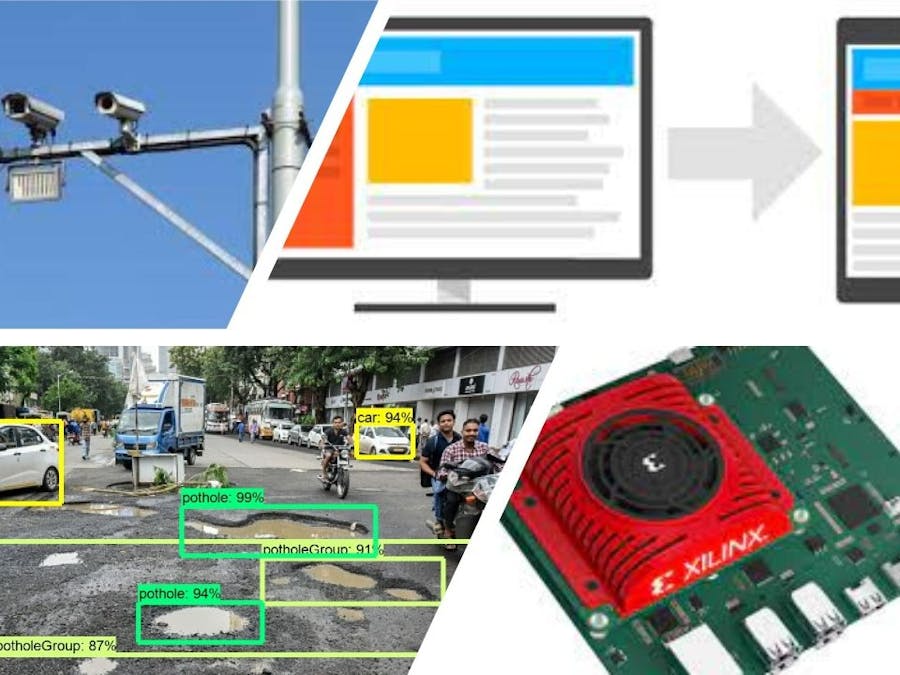

The author's idea is to create a system that can detect potholes, tag them with location coordinates, and transfer the results to a website or to the concerned authorities for repair and maintenance. A camera is attached to the Kria KV260 module, which runs the pothole-detection ML models. From the camera's image/video input, the Kria KV260 identifies the potholes and transfers the relevant information to the website/authority.
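As a rough illustration of the kind of information such a system might send, the sketch below packs one detection into a JSON record. The class name `PotholeReport`, every field name, and the sample values are assumptions made for illustration; the write-up does not specify the payload format.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class PotholeReport:
    """One detection event; all field names are hypothetical."""
    latitude: float       # location coordinate available on the board
    longitude: float
    num_detections: int   # number of potholes found in the frame
    image_path: str       # frame saved on the board for later upload

    def to_json(self) -> str:
        # sort_keys keeps the output stable, which makes logs easier to compare
        return json.dumps(asdict(self), sort_keys=True)


# Example values only; they do not come from the author's data
report = PotholeReport(23.0225, 72.5714, 2, "frames/000123.jpg")
payload = report.to_json()
print(payload)
```

A flat record like this is easy to pass on to an upload script or to store in a database next to the image.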

Timely improvement of roads reduces maintenance effort and prevents damage to vehicles. Potholes make a car less efficient because they force the driver to shift to lower gears, which consumes more fuel. They can also damage tires, wheels/rims, suspension, and exhaust systems.

Solution behind the scenes: A Kria KV260 is connected to a camera via USB/IAS and captures images/videos. The ML model is pre-trained on a custom data set of pothole images and runs on the board. The board identifies potholes and uploads the images to the website together with relevant information such as the coordinates and the amount of damage to the road. The model is trained on Google Colab/AWS, which yields the coefficient values, and is then run on the end/edge device, the KV260. The author has used the opencv and pandas libraries and the TensorFlow framework for the ML model task. The resulting images from the board, which are stored in the database, will help to retrain the model continuously for better accuracy.

Block Diagram:

- Train ML Model : This step starts with identifying the libraries and framework to be used. For the proposed idea, the author has chosen pillow, lxml, Cython, contextlib2, jupyter, matplotlib, pandas, and opencv-python. After that, install these libraries on the machine, or include them in scripts so that they are downloaded automatically on the platform.

Run these commands to create the "requirements.txt" file and to install the packages listed in it:

$ pipreqs . # generate requirements.txt from the project's imports

$ pip install -r requirements.txt # install the listed packages

Next comes writing the code for capturing images, training the model, and testing the model. These steps are generally well known to students and colleagues. The author has written the code and tested it with pre-captured images as shown in figures (2) and (3), and it works as expected.
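Before training, it can help to confirm that the listed packages are actually importable on the target machine. The sketch below is one way to do that with the standard library only; the helper name `missing_modules` is hypothetical. Note that some packages are imported under a different name than the one used with pip (pillow as `PIL`, opencv-python as `cv2`), and that `jupyter_core` is used here as a stand-in for the jupyter metapackage.

```python
import importlib.util

# pip package name -> module name used in an `import` statement
REQUIRED = {
    "pillow": "PIL",
    "lxml": "lxml",
    "Cython": "Cython",
    "contextlib2": "contextlib2",
    "jupyter": "jupyter_core",  # dependency of the jupyter metapackage
    "matplotlib": "matplotlib",
    "pandas": "pandas",
    "opencv-python": "cv2",
}


def missing_modules(required):
    """Return the pip names whose import module cannot be found."""
    return [pip_name for pip_name, module in required.items()
            if importlib.util.find_spec(module) is None]


missing = missing_modules(REQUIRED)
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All required packages are importable.")
```

Running this once on the board and once on the training machine shows quickly whether the two environments match.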

How to optimize this model for hardware limitations, power, latency, etc. is discussed in the Research section of this submission.

- Run ML Model as live-stream : We have written the model and tested it with data, which can be live, pre-captured, or custom. Now we run this model as a live stream on the platform, which means the user does not have to invoke the Python script manually every time. We generally write this as

$ python -u myscript.py >myscript.py.log 2>&1

Another option would be to keep the camera permanently occupied by our code. This is not advisable, because for dedicated hardware the camera is handled through its port address and the mapping to its own code, also called firmware.
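A long-running detection script usually boils down to a loop that pulls frames, runs the detector, and reacts to hits. The sketch below shows that shape with the frame source and the detector passed in as parameters, so it can be exercised without a camera. `run_stream`, `camera_frames`, `detect`, and `on_detect` are hypothetical names; the write-up does not show the author's loop, and the detector used in the dry run is a stand-in, not a real model.

```python
import logging
from typing import Callable, Iterable, List

logging.basicConfig(level=logging.INFO,
                    format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("pothole")


def run_stream(frames: Iterable,
               detect: Callable[[object], List],
               on_detect: Callable[[int, List], None]) -> int:
    """Run `detect` on every frame; call `on_detect` when potholes are found.

    Returns the number of frames that contained at least one detection.
    """
    hits = 0
    for index, frame in enumerate(frames):
        detections = detect(frame)
        if detections:
            hits += 1
            log.info("frame %d: %d pothole(s)", index, len(detections))
            on_detect(index, detections)
    return hits


def camera_frames(device: int = 0):
    """Yield frames from a camera via OpenCV (requires opencv-python)."""
    import cv2  # imported lazily so run_stream works without OpenCV
    capture = cv2.VideoCapture(device)
    try:
        while True:
            ok, frame = capture.read()
            if not ok:
                break  # camera unplugged or stream ended
            yield frame
    finally:
        capture.release()


# Dry run with fake "frames" and a stand-in detector
fake_frames = ["road", "pothole", "road", "pothole pothole"]
fake_detect = lambda frame: ["box"] * frame.count("pothole")
seen = []
hit_count = run_stream(fake_frames, fake_detect,
                       lambda i, d: seen.append((i, len(d))))
print(hit_count, seen)
```

On the board, `run_stream(camera_frames(0), model_detect, upload)` would be the equivalent call, with `model_detect` and `upload` supplied by the project.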

- Script for data-transfer to server : In the proposed idea, potholes are detected by the system, and the location coordinates are available to the system as pre-configuration. The next step is to upload this data to the cloud and use it to inform the authority/contractor so that the pothole can be repaired.

For this task the author has written a PowerShell script that receives the detected data as parameters and passes it on to the cloud. For example:

import subprocess, sys

# each value is passed as its own argument, converted to a string
p = subprocess.Popen(['powershell.exe', './Alert.ps1', str(location), str(num_detections)], stdout=sys.stdout)

print("Alert response is", p.wait())  # wait() returns the script's exit code

Here location and num_detections are values coming from our Python code; they are passed to the PowerShell script, which uploads them to the server/cloud.

Some readers may have questions about PowerShell: it is a cross-platform command-line shell and scripting language from Microsoft, also available on Linux, used for managing the platform, automation, and configuration. According to the author, it does not consume extra resources, and no extra libraries need to be included for this purpose. The file written by the author is also attached in the attachment section.
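A slightly more defensive way to call the alert script is to build the argument list explicitly and check the exit code. This is a sketch, not the author's attached script: the helper names are hypothetical, and it assumes `Alert.ps1` accepts the location and the detection count as two positional arguments. On Linux, PowerShell is normally installed as `pwsh`, so the executable name is left as a parameter.

```python
import subprocess
from typing import List


def build_alert_command(location: str, num_detections: int,
                        script: str = "./Alert.ps1",
                        shell_exe: str = "powershell.exe") -> List[str]:
    """Return the argv list; every value is a separate list element."""
    return [shell_exe, "-File", script, str(location), str(num_detections)]


def send_alert(location: str, num_detections: int, **kwargs) -> bool:
    """Run the alert script and report whether it exited with code 0."""
    command = build_alert_command(location, num_detections, **kwargs)
    try:
        result = subprocess.run(command, capture_output=True, text=True)
    except FileNotFoundError:
        print("PowerShell executable not found:", command[0])
        return False
    if result.returncode != 0:
        print("Alert failed:", result.stderr.strip())
    return result.returncode == 0


# Example values only; the location format is an assumption
command = build_alert_command("23.0225,72.5714", 2, shell_exe="pwsh")
print(command)
```

Keeping the command construction separate from the call makes it possible to log or inspect exactly what will be executed before anything is sent.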

- Create Linux Service for Running Project/Experiment : The last part of this project is to run the whole system as a Linux service, so that no other task can easily interrupt and stop our code running on the Kria platform. The author has added this as extra functionality; the project can also run on the platform without it.

This file will be stored as "run.sh"

#!/bin/sh

# run experiments

python -u myscript1.py >myscript1.py.log 2>&1 # multiple tasks can be added

python -u myscript2.py >myscript2.py.log 2>&1

Assuming that your code files and scripts are stored in the experiments directory, the following command brings our custom service up and keeps it running in the background.

$ nohup /home/samyak/experiments/run.sh > /home/samyak/experiments/run.sh.log </dev/null 2>&1 &

This completes the proposed project. The author now presents his research for this project and some future improvements, which he is carrying out as an add-on after this competition.
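For readers who prefer to stay in Python, the run.sh wrapper from the service step could also be written roughly as follows. This is a sketch under the same assumptions as the shell version (scripts run one after another, each with its own log file); the function name `run_experiments` is hypothetical.

```python
import subprocess
import sys
from pathlib import Path
from typing import Dict, Iterable


def run_experiments(scripts: Iterable[str]) -> Dict[str, int]:
    """Run each script in turn, logging to `<script>.log`; return exit codes."""
    exit_codes = {}
    for script in scripts:
        log_path = Path(str(script) + ".log")
        with log_path.open("w") as log_file:
            # -u: unbuffered output; stderr is merged into the same log (2>&1)
            result = subprocess.run([sys.executable, "-u", str(script)],
                                    stdout=log_file,
                                    stderr=subprocess.STDOUT)
        exit_codes[str(script)] = result.returncode
    return exit_codes
```

A call such as `run_experiments(["myscript1.py", "myscript2.py"])` mirrors the two lines in run.sh and additionally returns each exit code, which makes failures easier to spot.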

Research: When choosing libraries and a framework for a project, many of us get confused when it comes to running a model on embedded hardware, or an "edge device" in the newer terminology. There are many architectures, techniques, and frameworks available on the market for the same task.

In the author's experience, all libraries have similar footprints and resource requirements in terms of space, so we can instead focus on framework selection, where the limitations are the large size of full frameworks and their rich runtimes. Given these limitations, TF Lite/TF Lite Micro is the right choice.
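To make the TF Lite option concrete, the sketch below converts a trained TensorFlow model into a `.tflite` file using the standard converter API. It assumes the model was exported in SavedModel format; the function name and both paths are hypothetical, and this is not the author's conversion script.

```python
from pathlib import Path


def convert_to_tflite(saved_model_dir: str, output_path: str) -> int:
    """Convert a TensorFlow SavedModel to .tflite; return the size in bytes."""
    import tensorflow as tf  # lazy import: only needed where conversion runs

    converter = tf.lite.TFLiteConverter.from_saved_model(saved_model_dir)
    # Default optimizations enable post-training quantization of the weights
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    tflite_model = converter.convert()  # the converted model as bytes
    return Path(output_path).write_bytes(tflite_model)
```

Comparing the returned size with the size of the original model gives a first impression of how much space the smaller runtime format saves.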

For training the model, we can opt for "off-loading data processing, pruning, data curation" to optimize at the code level and "CMSIS-NN" for compiler/low-level optimizations. These techniques can help reduce inference latency, power, and bandwidth.

To overcome hardware limitations such as limited memory, processing power, and energy budget, we can work on the noise-to-signal ratio: irrelevant data can cause network congestion and data storage problems without adding value.
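One possible reading of this idea is to filter detections on the board before uploading them: drop low-confidence hits and drop reports that lie very close to one already kept. The sketch below illustrates that reading only; the thresholds, the field names (`lat`, `lon`, `score`), and the sample data are assumptions, not the author's NSR technique.

```python
import math
from typing import Dict, List


def haversine_m(lat1, lon1, lat2, lon2) -> float:
    """Great-circle distance in metres between two (lat, lon) points."""
    r = 6371000.0  # mean Earth radius in metres
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * r * math.asin(math.sqrt(a))


def filter_reports(reports: List[Dict], min_score: float = 0.5,
                   min_distance_m: float = 10.0) -> List[Dict]:
    """Keep confident detections that are not near an already kept one."""
    kept = []
    for rep in reports:
        if rep["score"] < min_score:
            continue  # low-confidence detection: treated as noise
        near_duplicate = any(
            haversine_m(rep["lat"], rep["lon"], k["lat"], k["lon"]) < min_distance_m
            for k in kept)
        if not near_duplicate:
            kept.append(rep)
    return kept


# Made-up sample data for illustration
reports = [
    {"lat": 23.02250, "lon": 72.57140, "score": 0.91},
    {"lat": 23.02251, "lon": 72.57141, "score": 0.88},  # about 1.5 m from the first
    {"lat": 23.02250, "lon": 72.57140, "score": 0.20},  # low confidence
    {"lat": 23.03000, "lon": 72.57140, "score": 0.75},  # several hundred metres away
]
print(len(filter_reports(reports)))
```

Filtering at the source reduces both the number of uploads and the amount of stored data, which is where the congestion and storage problems mentioned above would otherwise appear.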

Scope of Future Improvement: The author wants to continue this project by implementing a custom TF Lite Micro library for the KV260 and NSR techniques.
