Overview

The pandemic has introduced a new constraint on social interaction: distance. Because of this risk, countries all over the world have been in varying levels of quarantine, and many malls have had to close down due to a sharp drop in customer numbers. This has led to large-scale layoffs of mall personnel, as well as similar economic challenges for business owners.

This is reflected in low-income (less than $40,000 in annual earnings) job losses in the US due to COVID-19 as of July 2, 2020. Accommodation and Food Services, together with Retail Trade and Entertainment, account for ~4,000,000 of the estimated jobs lost.

Different industries face the same economic challenge: Food, Consumer, and Retail are among the top 6 industries with the highest number of employees laid off (an estimated 20,000+ jobs). Assuming “International” covers all non-US areas taken into account by this research, the total global layoff count reaches an estimated 103,000+ jobs.

Considering the given data, the team has determined that a major challenge is risk uncertainty, with regard to population density in different shops, for the malls that are still open. In addition, while wearing face masks and avoiding touch are mandatory in many places, there are still violations. This makes it more difficult for mall personnel and business owners who cannot work remotely to safely navigate this new normal workspace. This raises the question: how can we establish, with a reasonable level of certainty, that a particular shop in the mall is safe to enter at any point in time?

We are all now fighting the COVID-19 pandemic, and we have to adapt to the prevailing conditions with more safety measures. As life returns to normal, safety measures are also being added in public places and crowded areas in cities. But there are many situations where we have to break these measures and interact with an unsafe element to meet our needs. This project deals with preventing the spread of COVID-19 through touch interactions.

An experiment was conducted to see how a virus spreads through touch. The results were evaluated as follows:

Hence I decided to automate the most commonly used devices in housing societies to ensure hands-free interaction with them.

The following solutions were developed in making this prototype:

- Smart Intercom System using TinyML deployed on Arduino Nano 33 BLE Sense: A touch-free solution using computer vision and a TinyML model to detect a person outside the door and ring the bell without the person touching it.

- Temperature Monitoring System using IoT and an alert system: Amidst the pandemic, safety has become an important aspect. The Temperature Monitoring system detects people as they enter and measures their temperature. This temperature is displayed on a ThingSpeak IoT dashboard for timely trends and data analysis. When an abnormal temperature is detected, an alert is generated and the person undergoes a second inspection.

- Touch-Free elevator system using Speech Recognition TinyML model on Arduino Nano 33 BLE Sense: We use lifts to go up or down in a building several times a day, and I always have a fear of touching contaminated switches which have been touched by other people. This speech recognition model identifies whether a person wishes to go up or down and performs the action accordingly.

- Mask Detection System based on TinyML and IoT monitoring system: This method uses a computer vision model deployed on the Arduino Nano 33 BLE Sense to detect whether a person is wearing a mask; this data is sent to an IoT dashboard for monitoring and for imposing restrictions at unsafe times.

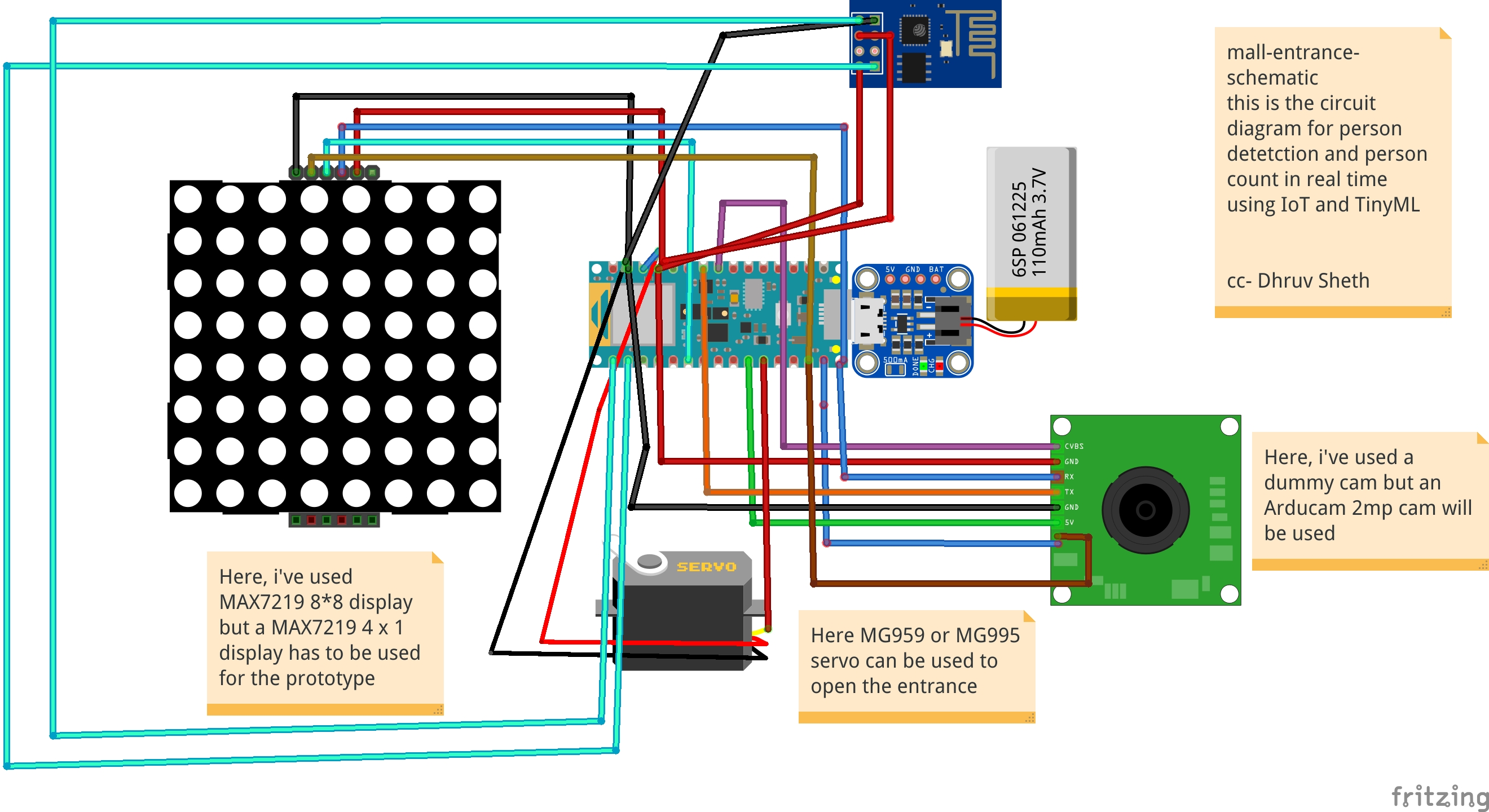

- Smart Queue monitoring and establishing system in a supermarket or a mall using TinyML, IoT and computer vision: This model detects people standing outside the supermarket and allows 50 people in at a time. It then waits 15 minutes to let the people inside complete their shopping before allowing the next set of 50 people in. This is done using computer vision and TinyML deployed on an Arduino Nano 33 BLE Sense. The data is then sent to an IoT dashboard where it can be tracked in real time.

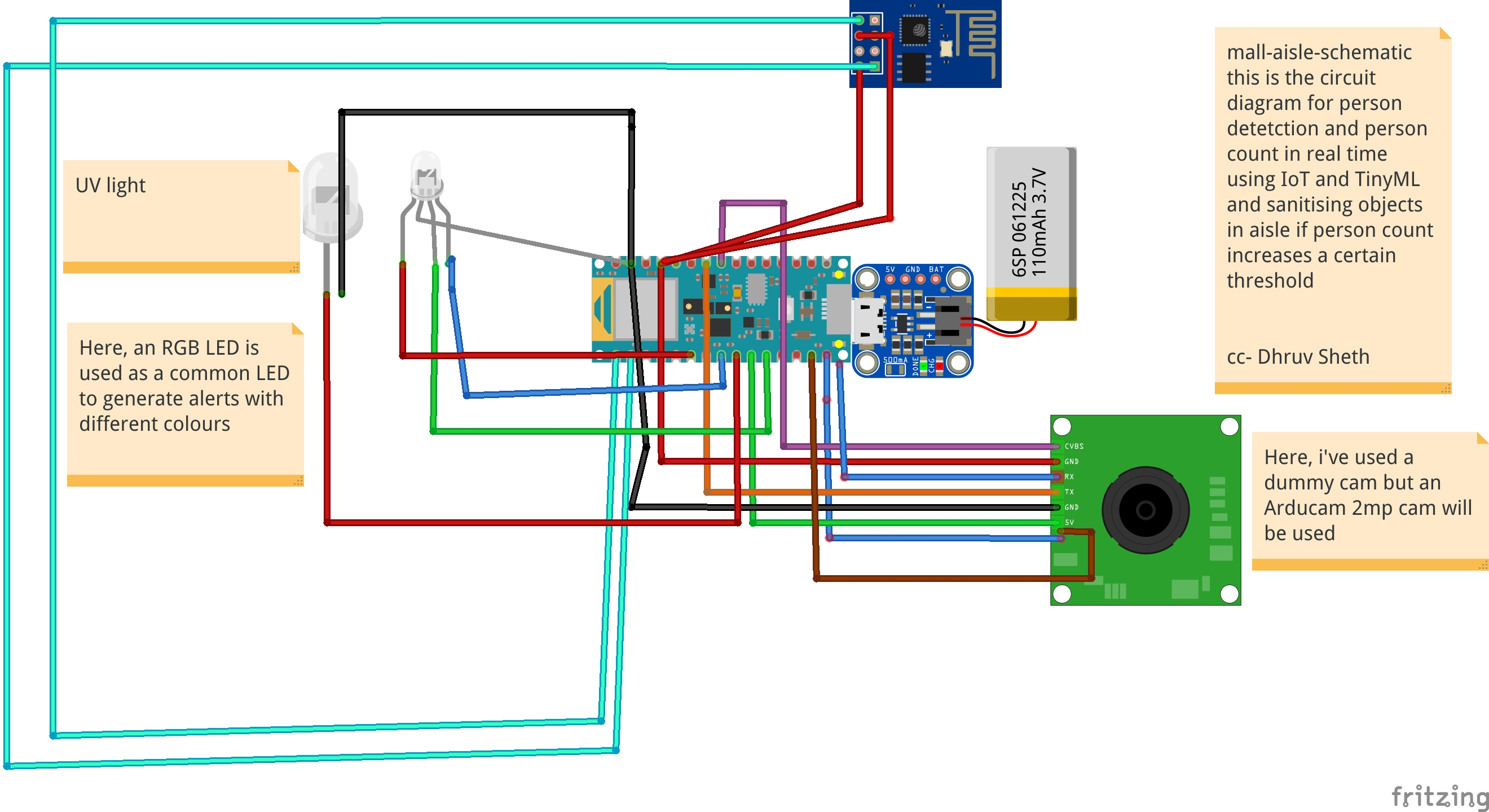

- Person Monitoring System in an Aisle in a Mall and contamination based Sanitization System: This solution uses a person detection algorithm deployed in an area of a mall or supermarket; if the contamination from people passing through the area exceeds a threshold, the area is sanitized with UV light. The sanitization periods and times are shown on an IoT dashboard for the supermarket staff to analyse.
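The batch-entry rule described for the Smart Queue system (50 people in, then a 15-minute wait) can be sketched as a small state machine. This is only a minimal illustration in plain C++, not the project's code: the `EntryGate` type, its member names and the use of a millisecond timestamp (such as the value returned by `millis()` on an Arduino) are assumptions made for the example.

```cpp
#include <cstdint>

// Values taken from the description above.
constexpr int kBatchSize = 50;                          // people admitted per batch
constexpr uint32_t kBatchWaitMs = 15u * 60u * 1000u;    // 15 minutes in milliseconds

// Hypothetical helper: decides whether the next detected person may enter.
struct EntryGate {
  int admitted_in_batch = 0;      // people admitted in the current batch
  uint32_t batch_full_at_ms = 0;  // time at which the current batch filled up

  // now_ms is a monotonically increasing timestamp in milliseconds.
  bool TryAdmit(uint32_t now_ms) {
    if (admitted_in_batch >= kBatchSize) {
      // Batch is full: keep the gate closed until the wait has elapsed.
      if (now_ms - batch_full_at_ms < kBatchWaitMs) {
        return false;
      }
      admitted_in_batch = 0;  // wait is over, start a new batch
    }
    ++admitted_in_batch;
    if (admitted_in_batch == kBatchSize) {
      batch_full_at_ms = now_ms;  // remember when the batch became full
    }
    return true;
  }
};
```

In a sketch like this, `TryAdmit` would be called once per person detected at the entrance, and its result would drive the gate and the dashboard counter.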

Hardware Required:

1) Arduino Nano 33 BLE Sense

The Arduino Nano 33 BLE Sense is an evolution of the traditional Arduino Nano, but features a much more powerful processor, the nRF52840 from Nordic Semiconductors, a 32-bit ARM® Cortex™-M4 CPU running at 64 MHz. This allows you to write larger programs than with the Arduino Uno (it has 1 MB of program memory, 32 times bigger), with many more variables (the RAM is 128 times bigger). The main processor includes other features such as Bluetooth® pairing via NFC and ultra-low-power consumption modes.

Embedded Artificial Intelligence

The main feature of this board, besides the impressive selection of sensors, is the possibility of running Edge Computing applications (AI) on it using TinyML. You can create your machine learning models using TensorFlow™ Lite and upload them to your board using the Arduino IDE.

2) ESP8266 ESP-01

The ESP8266 ESP-01 is a Wi-Fi module that allows microcontrollers access to a Wi-Fi network. This module is a self-contained SOC (System On a Chip) that doesn’t necessarily need a microcontroller to manipulate inputs and outputs as you would normally do with an Arduino, for example, because the ESP-01 acts as a small computer. Depending on the version of the ESP8266, it is possible to have up to 9 GPIOs (General Purpose Input Output). Thus, we can give a microcontroller internet access like the Wi-Fi shield does to the Arduino, or we can simply program the ESP8266 to not only have access to a Wi-Fi network, but to act as a microcontroller as well. This makes the ESP8266 very versatile.

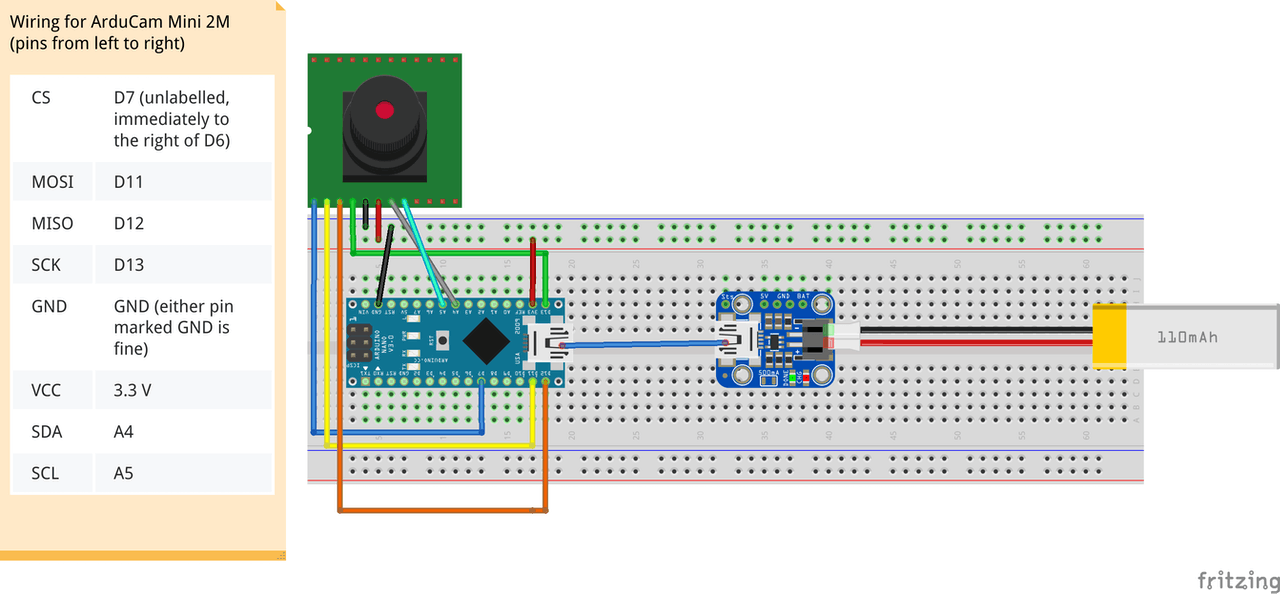

3) Arducam Mini 2MP Plus

ArduCAM-2MP-Plus is an optimized version of ArduCAM shield Rev.C. It is a high-definition 2MP SPI camera, which reduces the complexity of the camera control interface. It integrates the 2MP CMOS image sensor OV2640 and offers a miniature size, an easy-to-use hardware interface and an open source code library.

The ArduCAM mini can be used on any platform, such as Arduino, Raspberry Pi, Maple, Chipkit, or BeagleBone Black, as long as it has SPI and I2C interfaces, and it mates well with standard Arduino boards. ArduCAM mini not only adds a camera interface to low-cost microcontrollers that lack one, but also makes it possible to add multiple cameras to a single microcontroller.

4) Arduino MKR WiFi 1010:

The Arduino MKR WiFi 1010 is the easiest point of entry to basic IoT and pico-network application design. Whether you are looking at building a sensor network connected to your office or home router, or if you want to create a BLE device sending data to a cellphone, the MKR WiFi 1010 is your one-stop-solution for many of the basic IoT application scenarios.

The board's main processor is a low power Arm® Cortex®-M0 32-bit SAMD21, like in the other boards within the Arduino MKR family. The WiFi and Bluetooth® connectivity is performed with a module from u-blox, the NINA-W10, a low power chipset operating in the 2.4GHz range. On top of those, secure communication is ensured through the Microchip® ECC508 crypto chip. Besides that, you can find a battery charger, and a directionable RGB LED on-board.

Software Tools:

1) Arduino Web Editor

Arduino Create is an integrated online platform that enables Makers and Professional Developers to write code, access content, configure boards, and share projects. Go from an idea to finished IoT project quicker than ever before. With Arduino Create you can use an online IDE, connect multiple devices with the Arduino IoT Cloud, browse a collection of projects on Arduino Project Hub, and connect remotely to your boards with Arduino Device Manager. As well you can share your creations, along with step-by-step guides, schematics, references, and receive feedback from others.

2) Edge Impulse Studio:

The trend to run ML on microcontrollers is sometimes called Embedded ML or TinyML. TinyML has the potential to create small devices that can make smart decisions without needing to send data to the cloud, which is great from an efficiency and privacy perspective. Even powerful deep learning models (based on artificial neural networks) are now reaching microcontrollers. Over the past year, great strides were made in making deep learning models smaller, faster and runnable on embedded hardware through projects like TensorFlow Lite for Microcontrollers, uTensor and Arm’s CMSIS-NN; but building a quality dataset, extracting the right features, and training and deploying these models can still be complicated.

Using Edge Impulse you can now quickly collect real-world sensor data, train ML models on this data in the cloud, and then deploy the model back to your Arduino device. From there you can integrate the model into your Arduino sketches with a single function call. Your sensors are then a whole lot smarter, being able to make sense of complex events in the real world. The built-in examples allow you to collect data from the accelerometer and the microphone, but it’s easy to integrate other sensors with a few lines of code.

3) ThingSpeak:

ThingSpeak™ is an IoT analytics service that allows you to aggregate, visualize, and analyze live data streams in the cloud. ThingSpeak provides instant visualizations of data posted by your devices to ThingSpeak. With the ability to execute MATLAB® code in ThingSpeak, you can perform online analysis and process data as it comes in. ThingSpeak is often used for prototyping and proof-of-concept IoT systems that require analytics.

You can send data from any internet-connected device directly to ThingSpeak using a Rest API or MQTT. In addition, cloud-to-cloud integrations with The Things Network, Senet, the Libelium Meshlium gateway, and Particle.io enable sensor data to reach ThingSpeak over LoRaWAN® and 4G/3G cellular connections.
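As a rough illustration of the REST route, the sketch below builds the path of a ThingSpeak channel update request, which takes the form `/update?api_key=...&field1=...` on `api.thingspeak.com`. It is written in plain C++ so it can be checked on a desktop; the function name, the choice of `field1` for the temperature and the key value are assumptions for the example, and on an Arduino board the same string would more likely be assembled with the `String` class and sent through a WiFi client.

```cpp
#include <cstdio>
#include <string>

// Builds the path of a ThingSpeak "update" request that writes one
// temperature value to field1 of a channel.
// write_api_key is a placeholder for the channel's Write API Key.
std::string BuildThingSpeakUpdatePath(const std::string& write_api_key,
                                      float temperature_c) {
  char value[16];
  // One decimal place is assumed to be enough for a body temperature reading.
  std::snprintf(value, sizeof(value), "%.1f", temperature_c);
  return "/update?api_key=" + write_api_key + "&field1=" + value;
}
```

For example, `BuildThingSpeakUpdatePath("ABC123", 36.6f)` yields `/update?api_key=ABC123&field1=36.6`, which would then be requested with an HTTP GET.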

Getting started with Implementations:

1st Project: Touch-Free elevator system using Speech Recognition TinyML model on Arduino Nano 33 BLE Sense:

While commuting, we use elevators several times a day. A commonly used switch is contaminated by everyone who has touched it earlier. So, I decided to create a touch-free solution for elevators which uses voice commands and works without IoT or a WiFi network. Touch-free elevator systems have been implemented using gesture control or ultrasonic sensors, but these sensors need to be activated from a close distance, which increases the risk of touch. They are also highly sensitive and are triggered even when small objects come in the way. Accordingly, I propose a more accurate solution using voice detection on the Arduino Nano 33 BLE Sense. This model takes two commands, "up" or "down", and accordingly sends data to the servo to push the respective button on the switch panel. A servo is used because most housing societies have prebuilt switch and display systems; rather than interfering with these existing systems, this project adds an external hardware system to control them.

The core logic used to perform this function is:

Training the model to interpret "up" and "down" commands on Edge Impulse Studio

a) Accumulating raw data for the training and testing data sets. Here, I've accumulated 1 min 30 s of data, with each "up" and "down" sample lasting 2 s.

b) Creating an impulse based on the required parameters:

Here, I've set the window increment to 300 ms and used a Keras neural network block, which is suited to microphone and accelerometer data.

c) Converting raw data to processed data. Just below the raw data, we can see the raw data features, and the processed data is shown as DSP results based on cepstral coefficients.

d) Training on the input data to generate processed features. I've recorded the same commands at low and high frequencies to train the impulse better and to make the voice recognition accurate across all types of voices. Here, the feature output forms an "S" curve with central differentiation on the x, y and z axes.

e) Finally, Designing a Neural Network Architecture in Edge Impulse Neural Network Classifier and training the Network.

Here, the Neural Network Architecture designed for the data input is as follows:

- Input layer

- Reshape Layer

- 1D conv/pool layer (30 neurons, kernel size 5)

- 1D conv/pool layer (10 neurons, kernel size 5)

- Flatten layer

The model performed fairly well, with an average accuracy of 91.2% and a loss of 0.29. The model was trained for 100 training cycles (epochs). The confusion matrix looks clear and accurate, with most of the data matching its labelled class.

After the model was trained, I tested it with test data and live data, and it reached an accuracy of 75% on 24 seconds of test data.

Finally, once the model was trained and reached a decent accuracy, I exported it as an Arduino library and deployed it on the Arduino Nano 33 BLE Sense.

After the script was ready, I edited it in the Arduino Web Editor for ease of customisation. The final script that can be deployed on the Arduino Nano 33 BLE Sense is as follows:

You can see the main.ino file on the Arduino Web Editor platform here:

Main-script.ino - Arduino Web Editor

Main-script.ino - Github

Deploying to Arduino 33 BLE Sense

As of this writing, the only Arduino board with a built-in microphone is the Arduino Nano 33 BLE Sense, so that’s what we’ll be using for this section. If you’re using a different Arduino board and attaching your own microphone, you’ll need to implement


The Arduino Nano 33 BLE Sense also has a built-in LED, which we use to indicate that a word has been recognized; the same event also controls the servo to perform the function.

Here's a snippet of the micro features code in the model

The logic works as follows for response to the Command:

// If we hear a command, light up the appropriate LED and turn on the appropriate servo

if (found_command[0] == 'u') { // "up": compare the first character, since 'up' is not a valid single-character literal

last_command_time = current_time;

digitalWrite(LEDG, LOW); // Green for up, turning on the LED by LOW command

servo_7.write(0); // Rotating servo to 0degrees to click the button on the lift when said "up"

delay(100);

digitalWrite(LEDG, HIGH); // Switching off the LED after flashing the command

servo_7.write(180); // servo returning to its original position i.e 180degrees

}

// If we hear a command, light up the appropriate LED and turn on the appropriate servo

if (found_command[0] == 'd') { // "down": compare the first character, since 'down' is not a valid single-character literal

last_command_time = current_time;

digitalWrite(LEDG, LOW); // Green LED on for down, turning on the LED by LOW command

servo_7.write(0); // Rotating servo to 0degrees to click the button on the lift when said "down"

delay(100);

digitalWrite(LEDG, HIGH); // Switching off the LED after flashing the command

servo_7.write(180); // servo returning to its original position i.e 180degrees

}

The second command response is the same as the first, but turns the servo when the command "down" is heard.
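An alternative to checking a single character is to compare the whole recognized label. The sketch below is a minimal, board-independent illustration of that idea: it assumes the recognizer hands back a null-terminated C string, and the `LiftAction` enum and `ActionForCommand` function are names invented for this example rather than part of the project's script.

```cpp
#include <cstring>

// Hypothetical set of actions the servo logic could take.
enum class LiftAction { kNone, kPressUp, kPressDown };

// Maps a recognized label to an action by comparing the full string,
// so labels that merely share a first letter are not confused.
LiftAction ActionForCommand(const char* found_command) {
  if (std::strcmp(found_command, "up") == 0) {
    return LiftAction::kPressUp;
  }
  if (std::strcmp(found_command, "down") == 0) {
    return LiftAction::kPressDown;
  }
  return LiftAction::kNone;  // silence, unknown words, or anything else
}
```

The caller would then switch on the returned action to flash the LED and move the servo, keeping the string handling separate from the hardware calls.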

After training data, we get a trained tflite dataset output as follows:

Here, we are using the inbuilt microphone on the Arduino Nano 33 BLE Sense, and the model uses an average of ~24-28 KB of the board's 1 MB flash memory. This model is lightweight compared to an image recognition model and can process information at a much higher rate.

The Logic in the Speech recognition model goes as follows:

- Data is captured: audio samples are captured from the microphone

- Raw audio data is converted into spectrograms

- The TFLite interpreter runs the model

- The inference output is used to decide whether a command was heard

- If the down command is heard, the servo moves, pressing the down key on the lift control panel.

- If the up command is heard, the servo moves, pressing the up key on the lift control panel.
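The step that decides whether a command was heard can be sketched as picking the highest-scoring label and accepting it only above a confidence threshold. This is an illustrative sketch, not the exported library's logic: the label order, the 0-255 score range and the threshold are all assumptions, and the real values come from the trained model.

```cpp
#include <cstdint>

// Hypothetical label order for the model's output vector.
enum Command { kSilence = 0, kUnknown = 1, kUp = 2, kDown = 3, kCommandCount = 4 };

// Returns the label with the highest score, or kUnknown when even the
// best score is below the threshold (i.e. no confident detection).
Command PickCommand(const uint8_t scores[kCommandCount], uint8_t threshold) {
  int best = 0;
  for (int i = 1; i < kCommandCount; ++i) {
    if (scores[i] > scores[best]) {
      best = i;
    }
  }
  if (scores[best] < threshold) {
    return kUnknown;
  }
  return static_cast<Command>(best);
}
```

Only a result of `kUp` or `kDown` would then move the servo; `kSilence` and `kUnknown` leave the lift panel untouched.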

The above is the data in the library of the trained model. The main functions are included in the Tensorflow file.

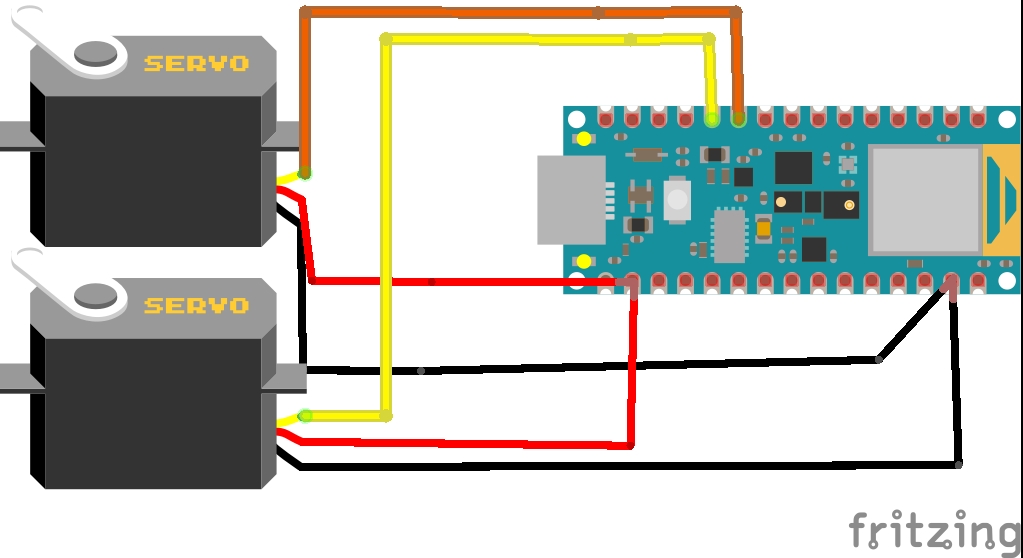

Circuit Diagram for the project:

The Arduino Nano 33 BLE Sense utilizes the inbuilt microphone for collecting raw data. Since inferencing on the Arduino takes time, I've added a delay of 100 milliseconds to process the data accurately. Between two recording samples, the inbuilt blue LED flashes, indicating that the command should be spoken between the two LED flashes.

This is a simulation of the Arduino 33 BLE Sense which demonstrates the flashing of The LED between two successful Microphone input Intervals

Based on command input, the servo rotates accordingly

There are other ways to build a touch-free elevator automation system, such as ultrasonic sensors or gesture sensors, but both have their own flaws, so I decided to make a voice-controlled elevator automation system.

Flaws in ultrasonic sensors: Ultrasonic sensors are highly motion sensitive. If any object moving in the passage comes within range, they are activated. Ultrasonic sensors are also not accurate enough and sometimes report wrong information.

Flaws in gesture-controlled sensors: These sensors are more accurate than ultrasonic sensors, but they need to be activated from a closer distance. This increases the risk of contact between hands and the lift panel.

A speech-controlled elevator panel, however, is more accurate than the above two solutions and can be activated from a greater distance than ultrasonic and gesture sensors.

Here is a Graph of Accuracy vs Activation Distance and where these sensors stand.

This shows the distance needed to activate the gesture sensor. Since this distance is very short, the risk of contamination is high.

Next, we move on to the second sub-project of the main project.

2nd Project: Smart Intercom system using facial recognition and TinyML:

CDC updated its site and issued a news release saying that indirect contact from a surface contaminated with the new coronavirus, known as fomite transmission, is a potential way to contract the new coronavirus.

"Based on data from lab studies on Covid-19 and what we know about similar respiratory diseases, it is possible that a person can get Covid-19 by touching a surface or object that has the virus on it and then touching their own mouth, nose, or possibly their eyes," CDC said.

Research has found the new coronavirus can last up to three days on plastic and metal surfaces and on cardboard for 24 hours. However, there are a lot of things that need to happen for a person to contract Covid-19 from touching a contaminated surface.

First, a person must come in contact with enough of the virus to actually cause an infection. For example, to be infected with the influenza virus, millions of copies of the virus need to reach a person's face from a surface, but only a few thousand copies are needed when the virus goes directly into the lungs, the New York Times reports.

If a person happens to touch a surface with large traces of the virus, they'd have to pick up enough of the virus and then touch their eyes, nose, or mouth, which is why public health experts say it's so important to avoid touching surfaces too often and to refrain from touching contaminated or frequently touched objects.

The COVID-19 virus is spreading across the World. Even when it finally does subside, people will have developed a sensitivity about touching things in public. Since most Intercoms are designed to require a button press to make a call, I decided touchless intercom solutions were needed that don't require people to touch anything.

The above image shows a facial recognition based intercom system implemented.

To solve the issue of touch-based systems, it is necessary to reduce the points of contact. Traditional intercoms are switch-based systems that ring the bell when pressed. To replace this touch-based system, which increases the risk of surface contamination, I decided to build a touch-free person detection system deployed on the Arduino Nano 33 BLE Sense, based on TinyML and TensorFlow Lite.

In this smart intercom system, I have used the Person detection algorithm deployed on Arduino 33 BLE Sense which identifies people and accordingly, rings the bell with a LED Matrix display reading out "Person".

Heading towards the implementation of the Smart Intercom System:

The following software has been used in designing this model:

- TensorFlow lite

- Arduino Web Editor

In this person detection model, I have used the pre-trained TensorFlow person detection model, which suits the project. This pre-trained model has three classes, one of which ("unused") has no defined data:

"unused",

"person",

"notperson"

In our model, the Arducam Mini 2MP Plus captures images, and this image data is sent at a decent frame rate to the Arduino Nano 33 BLE Sense for processing and classification. Since the microcontroller provides only 256 KB of RAM, each image is resized to a standard 96*96 for processing and classification. The Arduino TensorFlow Lite network consists of the following layers:

- Depthwise Conv_2D

- Conv_2D

- AVERAGE Pool_2D

- Flatten layer

This network architecture is used for the person detection model.
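To make the 96*96 input size concrete, the sketch below downscales a grayscale frame to 96x96 with nearest-neighbour sampling. It is an illustration only: the image provider used in this project decodes and crops the camera's JPEG output instead, and the function name and the flat row-major `std::vector` layout are assumptions made so the example can run on a desktop.

```cpp
#include <cstdint>
#include <vector>

constexpr int kModelWidth = 96;   // input width expected by the model
constexpr int kModelHeight = 96;  // input height expected by the model

// Downscales a row-major grayscale image (one byte per pixel) to 96x96
// by copying the nearest source pixel for every destination pixel.
std::vector<uint8_t> DownscaleNearest(const std::vector<uint8_t>& src,
                                      int src_w, int src_h) {
  std::vector<uint8_t> dst(kModelWidth * kModelHeight);
  for (int y = 0; y < kModelHeight; ++y) {
    const int sy = y * src_h / kModelHeight;  // matching source row
    for (int x = 0; x < kModelWidth; ++x) {
      const int sx = x * src_w / kModelWidth;  // matching source column
      dst[y * kModelWidth + x] = src[sy * src_w + sx];
    }
  }
  return dst;
}
```

The result occupies 96 * 96 = 9,216 bytes, which is small enough to sit alongside the model's working memory in 256 KB of RAM.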

The following is the most important step when processing outputs on the microcontroller, which are handled via arduino_detection_responder.cpp:

// Process the inference results.

uint8_t person_score = output->data.uint8[kPersonIndex];

uint8_t no_person_score = output->data.uint8[kNotAPersonIndex];

RespondToDetection(error_reporter, person_score, no_person_score);

In the call above, person_score and no_person_score are the classification scores the model assigned to each class.

The Logic works in the Following way:

├── Autonomous Intercom System

├── Arducam Mini 2mp plus

│ ├── Visual data sent to Arduino

├── Arduino 33 BLE Sense

│ ├── if person_score > no_person_score

│ │ ├── Activate the buzzer

│ │ ├── Display "Person" on the LED Matrix

│ │ └── ...Repeat the loop

Following the above logic, the Arducam Mini 2MP Plus continuously takes in visual data and sends it to the Arduino Nano 33 BLE Sense to process and classify. Once the raw data is converted to processed data, it is classified according to the trained model. If a person is detected, the Arduino signals the buzzer to activate and the MAX7219 to display "Person". This is how the logic of the system works.

Functioning and Working of Logic in Code:

The following are the libraries included in the main.ino code for the functioning of the model.

#include <TensorFlowLite.h>

#include "main_functions.h"

#include "detection_responder.h"

#include "image_provider.h"

#include "model_settings.h"

#include "person_detect_model_data.h"

#include "tensorflow/lite/micro/kernels/micro_ops.h"

#include "tensorflow/lite/micro/micro_error_reporter.h"

#include "tensorflow/lite/micro/micro_interpreter.h"

#include "tensorflow/lite/micro/micro_mutable_op_resolver.h"

#include "tensorflow/lite/schema/schema_generated.h"

#include "tensorflow/lite/version.h"

In the following code snippet, the loop is defined and performed. Since this is the main.ino code, it controls the core functioning of the model and runs the libraries used by the model.

void loop() {

// Get image from provider.

if (kTfLiteOk != GetImage(error_reporter, kNumCols, kNumRows, kNumChannels,

input->data.uint8)) {

TF_LITE_REPORT_ERROR(error_reporter, "Image capture failed.");

}

// Run the model on this input and make sure it succeeds.

if (kTfLiteOk != interpreter->Invoke()) {

TF_LITE_REPORT_ERROR(error_reporter, "Invoke failed.");

}

TfLiteTensor* output = interpreter->output(0);

// Process the inference results.

uint8_t person_score = output->data.uint8[kPersonIndex];

uint8_t no_person_score = output->data.uint8[kNotAPersonIndex];

RespondToDetection(error_reporter, person_score, no_person_score);

}

The following code snippet shows the libraries required to capture the image for inference. After capture, the images are converted to a standardised 96*96 size that can be interpreted on the Arduino board.

Here, the Arducam mini 2mp OV2640 library has been utilised.

This code has been provided in the arduino_image_provider.cpp snippet

#if defined(ARDUINO) && !defined(ARDUINO_ARDUINO_NANO33BLE)

#define ARDUINO_EXCLUDE_CODE

#endif // defined(ARDUINO) && !defined(ARDUINO_ARDUINO_NANO33BLE)

#ifndef ARDUINO_EXCLUDE_CODE

// Required by Arducam library

#include <SPI.h>

#include <Wire.h>

#include <memorysaver.h>

// Arducam library

#include <ArduCAM.h>

// JPEGDecoder library

#include <JPEGDecoder.h>

// Checks that the Arducam library has been correctly configured

#if !(defined OV2640_MINI_2MP_PLUS)

#error Please select the hardware platform and camera module in the Arduino/libraries/ArduCAM/memorysaver.h

#endif

// The size of our temporary buffer for holding

// JPEG data received from the Arducam module

#define MAX_JPEG_BYTES 4096

// The pin connected to the Arducam Chip Select

#define CS 7

// Camera library instance

ArduCAM myCAM(OV2640, CS);

// Temporary buffer for holding JPEG data from camera

uint8_t jpeg_buffer[MAX_JPEG_BYTES] = {0};

// Length of the JPEG data currently in the buffer

uint32_t jpeg_length = 0;

// Get the camera module ready

TfLiteStatus InitCamera(tflite::ErrorReporter* error_reporter) {

TF_LITE_REPORT_ERROR(error_reporter, "Attempting to start Arducam");

// Enable the Wire library

Wire.begin();

// Configure the CS pin

pinMode(CS, OUTPUT);

digitalWrite(CS, HIGH);

// initialize SPI

SPI.begin();

// Reset the CPLD

myCAM.write_reg(0x07, 0x80);

delay(100);

myCAM.write_reg(0x07, 0x00);

delay(100);

// Test whether we can communicate with Arducam via SPI

myCAM.write_reg(ARDUCHIP_TEST1, 0x55);

uint8_t test;

test = myCAM.read_reg(ARDUCHIP_TEST1);

if (test != 0x55) {

TF_LITE_REPORT_ERROR(error_reporter, "Can't communicate with Arducam");

delay(1000);

return kTfLiteError;

}

The following is the arduino_detection_responder.cpp code, which controls the main output of the model. Here, the classification scores defined in the main.ino code are taken into consideration, and outputs are provided according to the confidence of the person score.

// Switch on the green LED when a person is detected,

// the red when no person is detected

if (person_score > no_person_score) {

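// If a person is detected at the door, the buzzer switches on

// and the LED matrix in the house displays "Person"

digitalWrite(LEDG, LOW); // green LED on (the on-board LEDs are switched on by LOW)

digitalWrite(LEDR, HIGH); // red LED off

digitalWrite(5, LOW); // external output on pin 5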

myDisplay.setTextAlignment(PA_CENTER);

myDisplay.print("Person");

delay(100);

} else {

digitalWrite(LEDG, HIGH);

digitalWrite(LEDR, LOW);

}

TF_LITE_REPORT_ERROR(error_reporter, "Person score: %d No person score: %d",

person_score, no_person_score);

}

#endif // ARDUINO_EXCLUDE_CODE

Working of the Firmware:

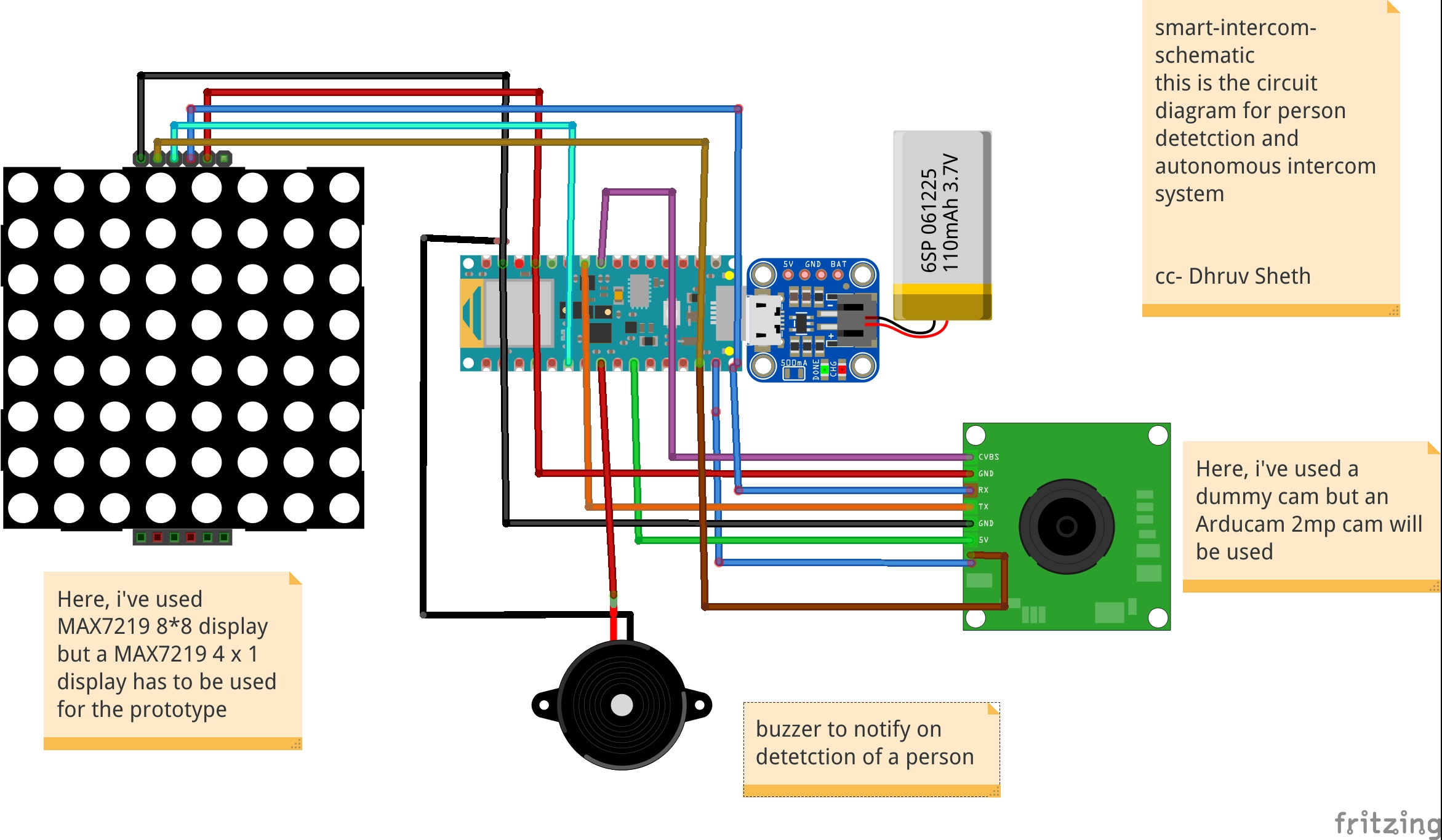

This is the complete hardware setup, designed in Fritzing.

This simulation shows the capture of data by the Arducam and the classification of this data by the Arduino Nano 33 BLE Sense.

The setup uses the following hardware:

- Arduino Nano 33 BLE Sense - Processes and classifies the gathered data, and sends commands according to the programmed logic.

- Buzzer - For Alerting when a person is at the door.

- Arducam Mini 2mp plus - Continuous Raw data image accumulation from source.

- Adafruit lithium ion charger - Used to deliver charge through the lithium battery

- Lithium ion Battery - power source

- MAX7219 4 in 1 display - Used for displaying "person" on the display screen.

Additional Features: Using the existing intercom system, it is possible to add a servo to push the button to view the person who is standing at the door as shown in the image:

This can be an additional setup in intercom system to switch on video when a person is detected. However, this additional system has to be deployed on the Existing Intercom system.

Additional code added to the existing code:

// Switch on the green LED when a person is detected,

// the red when no person is detected

if (person_score > no_person_score) {

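// If a person is detected at the door, the buzzer switches on

// and the LED matrix in the house displays "Person"

digitalWrite(LEDG, LOW); // green LED on (the on-board LEDs are switched on by LOW)

digitalWrite(LEDR, HIGH); // red LED off

digitalWrite(5, LOW); // external output on pin 5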

myDisplay.setTextAlignment(PA_CENTER);

myDisplay.print("Person");

servo_8.write(0); // this switches on the intercom by rotating servo

delay(500);

servo_8.write(180); // this switches off the intercom by rotating servo

delay(100);

} else {

digitalWrite(LEDG, HIGH);

digitalWrite(LEDR, LOW);

}

I have added an additional function which rotates the servo to switch on the existing intercom and then returns it to its original position.

3rd Project: Autonomous IoT based person temperature sensing automation:

The temperature sensor for Arduino is a fundamental element when we want to measure the temperature of a process or of the human body.

The temperature sensor with Arduino must be in contact with or close to the subject to receive and measure the heat level. That's how thermometers work.

These devices are widely used to measure the body temperature of sick people, as temperature is one of the first factors to change in the human body when there is an abnormality or disease.

One of the diseases that alter the temperature of the human body is COVID 19. Therefore, we present the main symptoms:

- Cough

- Tiredness

- Difficulty breathing (Severe cases)

- Fever

Fever is a symptom whose main characteristic is an increase in body temperature. In this disease, we need to constantly monitor these symptoms.

The retail market has been hit hard by the pandemic. Now that malls and supermarkets have reopened, it is necessary to ensure the safety of all customers who enter the premises. For this purpose, manual temperature checks have been set up. This increases labour and also creates a risk of contact between the person checking the temperature and the person whose temperature is being checked. This is demonstrated as follows:

This image shows the close contact or less social distance maintained between the two persons.

There is a second flaw which is faced in manual temperature checking system:

For the temperatures which have been checked, the data of these recordings is not stored or not synchronised with an external device for monitoring the temperatures measured.

Taking all these drawbacks into consideration, I've come up with an Arduino-based IoT solution deployed on the Arduino MKR WiFi 1010. Temperatures are measured using the Adafruit AMG8833 temperature module. Whenever a person is detected at the gate, the ultrasonic sensor sends the information to the Arduino MKR WiFi 1010, which commands the AMG8833 module to take a temperature reading. The module captures the data accurately and it is shown on an IoT dashboard in real time. If an abnormal temperature reading is detected, an alarm is set off so that mall security and staff can investigate immediately. Each reading is given a timestamp and can be viewed on the ThingSpeak dashboard as a temperature vs. time graph.

Similarly, it is possible to trace on which days and in which time ranges the supermarket or mall shows abnormal temperature readings, so that additional security measures can be implemented accordingly.

The image below shows the area where the setup can be installed.

On the entry gate, an HC-SR04 ultrasonic sensor can be installed to detect an arriving person; it signals the Arduino MKR WiFi 1010 when a person is detected within a 20 cm range. The Arduino then commands the AMG8833 temperature module to read the person's temperature. This whole process, from the ultrasonic sensor detecting the person to the temperature module taking the reading, takes time. Hence, to allow for this delay, the temperature module is mounted a little further along from the ultrasonic sensor.
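The 20 cm detection range depends on turning the HC-SR04 echo pulse into a distance. The sketch below illustrates that conversion as a plain function; it is not the project's own `ultra()` routine, which is not shown in this write-up. The names `echoToCentimetres` and `personInRange` are hypothetical, and the speed of sound is assumed to be about 343 m/s (roughly room temperature).

```cpp
#include <cstdint>

// Assumed speed of sound at about 20 degrees C, in centimetres per microsecond.
constexpr float kSoundCmPerUs = 0.0343f;

// Convert an HC-SR04 echo pulse width (microseconds) into a one-way distance (cm).
// The pulse covers the trip to the person and back, so the result is halved.
float echoToCentimetres(uint32_t echoMicros) {
  return static_cast<float>(echoMicros) * kSoundCmPerUs / 2.0f;
}

// Hypothetical helper: true when a valid echo places someone within the trigger range.
// Arduino's pulseIn() returns 0 on timeout, so a zero width is treated as "nobody there".
bool personInRange(uint32_t echoMicros, float rangeCm = 20.0f) {
  if (echoMicros == 0) return false;
  return echoToCentimetres(echoMicros) <= rangeCm;
}
```

On the board, the pulse width would typically come from `pulseIn(echoPin, HIGH)` after a short trigger pulse on `trigPin`.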

Whenever a person walks in, the gate is opened. (In the prototype, a servo functions as the gate; in a further implementation of the project, the servo will be replaced by a heavy-duty gate motor controlled via a motor driver, to which the Arduino will send commands.) The temperature reading of the person is taken and sent to the ThingSpeak IoT dashboard in real time via the Arduino MKR WiFi 1010. This requires an active WiFi connection on the mall premises, which is usually available. According to research, the body temperature of a person with fever is approximately 38.1 °C, which is about 100.6 °F. Hence, if the temperature module detects a temperature above this threshold, the servo turns to 180 degrees to close the gate and the buzzer sounds to alert people nearby. Security and other mall staff can then reach the area in time to handle the situation.

The Logic works in the Following way:

├── PersonTemperature Detection MKR WiFi 1010

├── HC-SR04 Ultrasonic sensor

│ ├── Person Detection

│ │ ├── If (person distance <= 20 cm), send command

├── Arduino

│ ├── if Ultrasonic sensor Sends a command;

│ │ ├── Open the gate - Servo(0)

│ │ ├── Activate the AMG8833 to take readings

│ │ ├── Send the Readings to ThingSpeak Dashboard

│ │ | ├── If Temperature > 38.1C

│ │ │ | ├── Close the gate - servo(180)

│ │ │ │ ├── Activate the Buzzer

│ │ └── ...Repeat the loop

This is how the complete logic of the temperature monitor functions.
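The decision tree above can also be written as one small pure function, which makes the three possible outcomes easy to check. This is only an illustrative sketch: `GateAction` and `decide` are hypothetical names, and the two constants are the 20 cm range and 38.1 °C threshold quoted in the text.

```cpp
// Possible outcomes of one pass through the monitoring loop.
enum class GateAction { Idle, Admit, Block };

constexpr float kTriggerDistanceCm = 20.0f;  // detection range from the text
constexpr float kFeverThresholdC = 38.1f;    // fever threshold from the text

// Decide what the gate should do for one distance/temperature pair.
GateAction decide(float distanceCm, float temperatureC) {
  if (distanceCm > kTriggerDistanceCm) return GateAction::Idle;    // nobody at the gate
  if (temperatureC > kFeverThresholdC) return GateAction::Block;   // close gate, sound buzzer
  return GateAction::Admit;                                        // keep gate open
}
```

Keeping the decision separate from the servo and buzzer calls means the thresholds can be tested on a desktop before the sketch is uploaded.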

The circuit Diagram for the Temperature Model is given as follows:

This is the complete Firmware and setup used in the project

This simulation shows the data type captured by the AMG 8833 Thermal Cam and this data is sent to the Arduino MKR WiFi 1010 to transfer commands

This simulation shows the distance captured by ultrasonic Sensor and this command is sent to the Arduino MKR WiFi to activate the AMG 8833 thermal sensor
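A note on the AMG8833 data: the sensor reports an 8x8 grid of temperatures, and the Adafruit library exposes it through `readPixels()`, which fills a 64-element float buffer, while `readThermistor()` returns the temperature of the sensor's own on-board thermistor. One possible way to reduce a frame to a single value is to take its warmest pixel, as sketched below. This is an assumption for illustration, not the method used in the project code, and `hottestPixel` is a hypothetical helper.

```cpp
#include <cstddef>

constexpr std::size_t kAmgPixelCount = 64;  // 8 x 8 thermal grid

// Return the warmest reading in one 8x8 frame (degrees Celsius).
float hottestPixel(const float (&pixels)[kAmgPixelCount]) {
  float hottest = pixels[0];
  for (std::size_t i = 1; i < kAmgPixelCount; ++i) {
    if (pixels[i] > hottest) hottest = pixels[i];
  }
  return hottest;
}
```

On the board, the buffer would be filled with `amg.readPixels(pixels)` before calling the helper.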

Instead of a servo based door opening system, I will be implementing a command system using motor driver to automate door sliding opening system as follows:

Setting up the IoT Dashboard:

Using the ThingSpeak IoT Dashboard:

ThingSpeak™ is an IoT analytics service that allows you to aggregate, visualize, and analyze live data streams in the cloud. ThingSpeak provides instant visualizations of data posted by your devices to ThingSpeak. With the ability to execute MATLAB® code in ThingSpeak, you can perform online analysis and process data as it comes in. ThingSpeak is often used for prototyping and proof-of-concept IoT systems that require analytics.

Since ThingSpeak was easy to set up and use, I preferred to go with that dashboard. This interface allows the user to share the dashboard with the security or staff department in the mall so that they can continuously monitor the people visiting the mall and impose restrictions at times when they feel the temperature risk is higher.

The above image shows the versatility of the ThingSpeak dashboard and the visualisations it can portray.

Logic used in the ThingSpeak IoT data collection process

This image shows the creation of visualisations in different channels. Here, I've created a Temperature vs Time graph in the visualisation section. Each reading collected by the AMG8833 sensor is allocated a timestamp and plotted on the graph, showing the time at which it was captured.

The data collected can be viewed in real time on the public dashboard here: ThingSpeak Temperature Dashboard

Similarly, this plot can be integrated into a single dashboard that is open to visitors, so they can view the temperature data before entering the mall. If visitors find an abnormal temperature reading on a particular day, they can choose not to go to the supermarket or mall that day to be safe.

The visualisation Integration data chart:

<iframe width="450" height="260" style="border: 1px solid #cccccc;" src="https://thingspeak.com/channels/1121969/charts/1?bgcolor=%23ffffff&color=%23d62020&dynamic=true&results=600&timescale=10&type=line"></iframe>

This chart can be embedded in a single dashboard alongside the charts of other malls and supermarkets in the area, so that a visitor can check which mall is performing better in terms of safety and choose the one where safety guidelines are followed and the people entering are not detected with fever. This can help the government establish safety and trust among people as malls and the retail sector reopen.

The code for this project can be viewed either on GitHub or in the Arduino Web Editor here: Temperature-Model-code

The logic in the code goes as follows:

#include <Wire.h>

#include <Adafruit_AMG88xx.h>

#include <Servo.h>

// #include <ESP32_Servo.h> // use this instead of Servo.h when building for an ESP32 board; do not include both

#include <WiFi.h>

#include <WiFiMulti.h>

Servo servo1;

int trigPin = 9;

int echoPin = 8;

long distance;

long duration;

WiFiMulti WiFiMulti;

const char* ssid = "JioFiber-tcz5n"; // Your SSID (Name of your WiFi) - This is a dummy name, enter your wifi ssid here

const char* password = "**********"; // Password omitted here; enter your own when running the script

const char* host = "api.thingspeak.com"; // Host name only; the channel ID (1121969) is not part of the host

String api_key = "8LNG46XKJEJC89FE"; // Your API key provided by ThingSpeak

Adafruit_AMG88xx amg;

Here, I have included the libraries and defined the required setup for the WiFi host and the API key for the temperature dashboard on ThingSpeak.

Here's an image of the Code in progress:

The next part of the code is Setting up the required components before the actual loop begins:

These are the prerequisites before the main loop. I have attached the servo pin and configured the ultrasonic sensor pins, and also started a test of the AMG8833 to check that it is connected and can read data.

void setup()

{

Serial.begin(9600);

servo1.attach(7);

pinMode(trigPin, OUTPUT);

pinMode(echoPin, INPUT);

pinMode(6, OUTPUT); // buzzer pin driven in loop()

Connect_to_Wifi();

Serial.println(F("AMG88xx test"));

bool status;

// default settings

status = amg.begin();

if (!status) {

Serial.println("Could not find a valid AMG88xx sensor, check wiring!");

while (1);

}

Serial.println("-- Thermistor Test --");

Serial.println();

delay(100); // let sensor boot up

}

The next part is the loop() function, where the complete process is carried out. Initially, I set the gate open to allow each person to enter. The AMG8833 collects data and reads the temperature of each person coming in. If the temperature is higher than the threshold, the gate is closed and an alarm (buzzer) is switched on to alert people and deny entry to that person.

void loop()

{

  ultra(); // measures the distance with the ultrasonic sensor (defined elsewhere in the sketch)

  servo1.write(0); // gate open by default

  if (distance <= 20) {

    float temperature = amg.readThermistor(); // read once and reuse the value

    Serial.print("Thermistor Temperature = ");

    Serial.print(temperature);

    Serial.println(" *C");

    Serial.println();

    // call function to send data to ThingSpeak

    Send_Data();

    delay(50);

    // a person with fever has an average body temperature of 38.1 degrees Celsius

    if (temperature > 38.1) { // if a person with fever is detected, entry is refused

      servo1.write(180); // close the gate

      digitalWrite(6, HIGH); // turn on the buzzer to alert people

    }

  }

}

The last part is sending the data to the ThingSpeak Dashboard:

Here, since I have only one field in the channel, all the data will be sent to that field. The captured amg.readThermistor() value is sent to the dashboard.
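As a standalone illustration of what such an update looks like on the wire, the sketch below builds the form-encoded body and the HTTP request as plain strings. It uses `std::string` so it can be checked on a desktop; on the board the Arduino `String` class would play the same role. The helper names are hypothetical and `"KEY"` in the usage note is a placeholder, not a real write key.

```cpp
#include <string>

// Build the form-encoded body for a ThingSpeak channel update on field1.
std::string buildUpdateBody(const std::string& writeApiKey, float temperatureC) {
  return "api_key=" + writeApiKey + "&field1=" + std::to_string(temperatureC);
}

// Wrap the body in a minimal HTTP/1.1 POST request for api.thingspeak.com.
// Header lines end in CRLF and a blank line separates headers from the body.
std::string buildUpdateRequest(const std::string& body) {
  return "POST /update HTTP/1.1\r\n"
         "Host: api.thingspeak.com\r\n"
         "Connection: close\r\n"
         "Content-Type: application/x-www-form-urlencoded\r\n"
         "Content-Length: " + std::to_string(body.size()) + "\r\n"
         "\r\n" + body;
}
```

For example, `buildUpdateRequest(buildUpdateBody("KEY", 36.5f))` yields a request whose Content-Length matches the body exactly, which is the detail most easily broken when the body is edited by hand.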

void Send_Data()

{

Serial.println("Prepare to send data");

// Use WiFiClient class to create TCP connections

WiFiClient client;

const int httpPort = 80;

if (!client.connect(host, httpPort)) {

Serial.println("connection failed");

return;

}

else

{

String data_to_send = api_key;

data_to_send += "&field1=";

data_to_send += String(amg.readThermistor());

data_to_send += "\r\n";

client.print("POST /update HTTP/1.1\n");

client.print("Host: api.thingspeak.com\n");

client.print("Connection: close\n");

client.print("X-THINGSPEAKAPIKEY: " + api_key + "\n");

client.print("Content-Type: application/x-www-form-urlencoded\n");

client.print("Content-Length: ");

client.print(data_to_send.length());

client.print("\n\n");

client.print(data_to_send);

delay(200); // reduced delay to perform real time data collection

}

client.stop();

}

This ends the code section of the project; we now move on to the use cases and go-to-market part of the project.

The above image shows the implementation of the methodology and model in supermarkets and malls.

GO TO MARKET & PRACTICALITY:

- Malls and Supermarkets can use this to identify Abnormal Temperature data and this data can be observed even for a certain day at a certain point of time.

- Implement Strategies using this data to ensure Safety and Compliance.

- Decrease Labour and automate Temperature Monitoring Process

- Offer Dashboard to the visitors to monitor if the mall is safe and accordingly visit the mall at the safest point of time.

- This product can be used to ensure the visitors that the mall is a safe place and hence, can increase the sales and visits following Government guidelines

- Companies offering IoT based solutions can invest in this product for mass production and distribution.

- The more supermarkets use this product, the more data is available to the government and the more choice customers have in selecting the safest place in their locality.

- A comparatively affordable solution: compared to manual temperature monitoring, it decreases labour cost and reduces the risk of infection, since in manual monitoring the person taking the temperature has to stand close to the visitor.

Now that malls and the retail sector have reopened, queue management in malls and supermarkets has become a big problem. Due to the pandemic, malls have to restrict the number of people inside to ensure safety compliance. But this is done manually, which increases labour. Also, the number of people inside the mall at a given point of time is not available to visitors. If this data were made available to visitors, it would increase the percentage of people visiting the mall.

Trying to run a shop or a service during the ongoing Corona crisis is certainly a challenge. Serving customers while keeping them and employees safe is tricky, but digital queuing can help a lot in this regard. The technologies behind virtual queues are not entirely new; the call for social distancing just highlights some of the many benefits they offer.

Most countries have introduced legal measures to combat the spread of COVID-19. To ensure customer satisfaction whilst adhering to the new regulations, one thing is for sure: long queues and crowded lobbies need to go. Digital queuing (also referred to as virtual or remote queuing) technology allows businesses to serve their customers in a timely manner while they stay out of harm’s way.

Generally speaking, you will likely find one or more of the following types of queue management solutions in a given retail environment:

- Structured queues: Lines form in a fixed, predetermined position. Examples are supermarket checkouts or airport security queues.

- Unstructured queues: Lines form naturally and spontaneously in varying locations and directions. Examples include taxi queues and waiting for consultants in specialist retail stores.

- Kiosk-based queues: Arriving customers enter basic information into a kiosk, allowing staff to respond accordingly. Kiosks are often used in banks, as well as medical and governmental facilities.

- Mobile queues: Rather than queuing up physically, customers use their smartphones. They do not have to wait in the store but rather can monitor the IoT Dashboard to see wait time at the store.

Long queues, whether they are structured or unstructured, often deter walk-in customers from entering the store. Additionally, they limit productivity and cause excess stress levels for customers and staff.

Does effective queue management directly affect the customer experience?

There is an interesting aspect about the experience of waiting in line: The waiting times we perceive often do not correspond with the actual times we spent in line. We may attribute a period of time falsely to be “longer” than normal or deem another period “shorter” despite it actually exceeding the average waiting time. For the most part, this has to do with how we can bridge the time waiting.

“Occupied time (walking to baggage claim) feels shorter than unoccupied time (standing at the carousel). Research on queuing has shown that, on average, people overestimate how long they’ve waited in a line by about 36 percent.”

The main reason customers are afraid to visit a supermarket is insufficient data. Visitors do not know the density of people inside the mall. The higher the number of people inside, the higher the risk of visiting. Manually counting the people who enter and exit the mall and updating this data in real time is not practical. Also, a visitor does not know which day and time are best suited to visit, nor the wait time at each mall, which would let them choose a nearby supermarket with a shorter wait. All this leads to lower conversion and fewer people visiting the mall. If visitors have access to population data, they gain a sense of trust, which leads to increased sales for malls. Hence, I've come up with an Arduino-based TinyML and IoT solution to make this data available to visitors and to increase the conversion of visitors while following the necessary safety guidelines.

This solution is based on computer vision and a person detection algorithm built on the TensorFlow framework.

It functions in the following way:

This solution is installed at the gates of the mall or supermarket. The Arducam Mini 2MP continuously captures image data and sends it to the Arduino Nano 33 BLE Sense to process and classify. If a person is detected, the Arduino increments the stored count of people inside the mall by 1 and the servo motor rotates, opening the entrance to allow the person inside. For each person admitted, the data is sent to the ThingSpeak IoT dashboard, which is open for visitors to view.

When the person count exceeds the threshold of 50 (this threshold can be altered depending on the size of the supermarket), the gate is closed and a wait time of 15 min is set until customers inside exit the store. The wait time is then displayed on the LED matrix display so that customers in the queue know how long they have to wait.

People can also keep track of the number of people inside the store. The number of people allowed inside the store at one time is 50.

The physical queuing unit of this product helps establish queues, while the IoT dashboard shows the total count of customers entering the store and the total count of customers leaving it. Currently this is the data displayed on the dashboard, but I am working on logic for displaying the waiting time required for a customer to get inside the mall. The logic is simple and depends on the number of people inside the mall: the total number of people who have entered (shown on the dashboard) minus the total number who have exited gives the number of people currently inside the store. Next, the number of people inside is subtracted from the threshold (the limit the store can accommodate). If the outcome is a negative integer, the waiting time is that negative integer multiplied by the negative of the average time a person spends inside the store. If the outcome is positive, there is no wait time.

Heading towards the implementation of the physical queuing system:

The following software has been used in designing this model:

- TensorFlow lite

- ThingSpeak

- Arduino Web Editor

In this person detection model, I have used the pre-trained TensorFlow person detection model, which suits the project. This pre-trained model has three classes, one of which ("unused") has no defined data:

"unused",

"person",

"notperson"

In our model, the Arducam Mini 2MP Plus captures images, and this image data is sent at a decent frame rate to the Arduino Nano 33 BLE Sense for processing and classification. Since the microcontroller provides only 256 KB of RAM, each image is resized to a standard 96x96 for processing and classification. The Arduino TensorFlow Lite network uses the following deep learning layers:

- Depthwise Conv_2D

- Conv_2D

- AVERAGE Pool_2D

- Flatten layer

These layers make up the person detection model.
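The reason for resizing to 96x96 becomes clearer with a little arithmetic on buffer sizes. The sketch below is a rough worked example only: it assumes a single-channel (grayscale) input with one byte per pixel, and compares it with a full-resolution 1600x1200 frame from the 2 MP camera stored the same way. The constant names mirror `kNumCols`, `kNumRows` and `kNumChannels` used later in the code, but the values here are stated assumptions.

```cpp
#include <cstddef>

constexpr std::size_t kNumCols = 96;
constexpr std::size_t kNumRows = 96;
constexpr std::size_t kNumChannels = 1;  // assumption: grayscale input

// Bytes needed for one model input at one byte per pixel.
constexpr std::size_t kInputBytes = kNumCols * kNumRows * kNumChannels;

// RAM on the microcontroller as quoted in the text (256 KB).
constexpr std::size_t kRamBytes = 256 * 1024;

// Assumption for comparison: a full 1600x1200 frame at one byte per pixel.
constexpr std::size_t kFullFrameBytes = 1600 * 1200;

static_assert(kInputBytes == 9216, "a 96x96 grayscale frame is 9216 bytes");
static_assert(kInputBytes < kRamBytes, "the resized frame fits in RAM");
static_assert(kFullFrameBytes > kRamBytes, "a full frame would not fit in RAM");
```

Under these assumptions the resized input takes about 9 KB, leaving most of the RAM for the model's working memory, whereas an uncompressed full-resolution frame would be several times larger than the RAM itself.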

The following is the most important call made while processing outputs on the microcontroller; its result is handled in Arduino_detection_responder.cpp:

// Process the inference results.

uint8_t person_score = output->data.uint8[kPersonIndex];

uint8_t no_person_score = output->data.uint8[kNotAPersonIndex];

RespondToDetection(error_reporter, person_score, no_person_score);

In this snippet, person_score and no_person_score hold the classification scores that the model assigns to each class.

Using these values, I produce certain outputs based on the confidence of person_score and no_person_score.

The detection responder logic of the code works in the following way:

├── Person Detection and responder - Entry

├── Arducam mini 2MP Plus

│ ├──Image and Video Data to Arduino

├── Arduino BLE 33 Sense

│ ├── processing and classification of the input data

│ │ ├── If person detected, open the gate - servo(180)

│ │ ├── If no person detected, close the gate - servo(0)

│ │ ├── Send the count of people who have entered to the ThingSpeak Dashboard via ESP8266-01

│ │ | ├── If people count has exceeded 50

│ │ │ | ├── Close the gate & wait for 15min to let the people inside move out

│ │ │ │ ├── Display wait time on a LED Matrix

│ │ └── ...Repeat the loop

├── Person Detection and responder - Exit

├── Arducam mini 2MP Plus

│ ├──Image and Video Data to Arduino

├── Arduino BLE 33 Sense

│ ├── processing and classification of the input data

│ │ ├── If person detected, open the gate - servo(180)

│ │ ├── If no person detected, close the gate - servo(0)

│ │ ├── Send the count of people who have exited to the ThingSpeak Dashboard via ESP8266-01

│ │ └── ...Repeat the loop

Following this logic, the Arducam Mini 2MP Plus continuously captures image data and sends it to the Arduino Nano 33 BLE Sense to process and classify. The overall model size is 125 KB. If a person is detected, the Arduino commands the servo to rotate to 180 degrees. If no person is detected, the servo is rotated to 0 degrees and the gate is closed. Each time a person is detected, the count increments by 1. If the count exceeds the threshold of 50, no more people are allowed inside and a wait time of 15 min is set.

The wait time is continuously displayed and updated on the LED matrix display.

This count is also displayed on the ThingSpeak IoT dashboard via the ESP8266-01.

Through the dashboard, an individual can easily see how many people are inside on a given day at a given point of time.

At the exit gate, the same logic is set. If a person is detected, the gate is opened while if no person is detected, the gate is closed. Each time a person is detected, the count increases by 1. This count is displayed on the ThingSpeak IoT dashboard.

In this way one can monitor the number of people entering and the number of people exiting.
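One practical detail when counting from camera frames: the camera produces many frames while a single person stands at the gate, so incrementing on every positive frame could count the same person several times. The sketch below shows one possible way around this, counting only when the result changes from "no person" to "person". It is an illustrative assumption rather than the project's implementation, and `EntryCounter` is a hypothetical class.

```cpp
#include <cstdint>

// Counts arrivals on the transition from "no person" to "person".
class EntryCounter {
 public:
  explicit EntryCounter(uint32_t capacity) : capacity_(capacity) {}

  // Feed one inference result; returns true when a new person was counted.
  bool update(uint8_t personScore, uint8_t noPersonScore) {
    const bool present = personScore > noPersonScore;
    const bool newArrival = present && !wasPresent_;
    wasPresent_ = present;
    if (newArrival) ++count_;
    return newArrival;
  }

  // True once the count has reached the store's limit.
  bool atCapacity() const { return count_ >= capacity_; }

  uint32_t count() const { return count_; }

 private:
  uint32_t capacity_;
  uint32_t count_ = 0;
  bool wasPresent_ = false;
};
```

With a capacity of 50, `atCapacity()` would become true after the fiftieth distinct arrival, which is the point at which the text closes the gate.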

Since the two models for entry and exit are deployed on two different microcontrollers, calculating the average wait time from data held on separate microcontrollers is a bit harder, but it uses a simple logic function.

X = number of people who have entered

Y = number of people who have exited

X - Y = number of people who are inside the mall

Z = threshold for the number of people allowed inside the mall

Let count = Z - (X - Y). This is the remaining capacity: it is negative when the number of people inside has crossed the threshold, and zero or positive when it is at or below the threshold.

If "count" is negative, the wait time is equal to count * (the negative of the average time a person spends inside the mall).

If "count" is zero or positive, the wait time is zero.

In this way, the average queue time is calculated.
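The formula above translates directly into a few lines of code. The sketch below is a plain transcription of it for illustration; the function names and the use of minutes as the time unit are assumptions, and the average visit time would have to be supplied from the store's own data.

```cpp
// Remaining capacity: Z - (X - Y), negative when the store is over its limit.
int remainingCapacity(int entered, int exited, int threshold) {
  return threshold - (entered - exited);
}

// Wait time as described in the text: zero when there is room, otherwise the
// (negative) remaining capacity times the negative of the average visit time.
int estimatedWaitMinutes(int entered, int exited, int threshold, int avgVisitMinutes) {
  const int count = remainingCapacity(entered, exited, threshold);
  return count < 0 ? count * -avgVisitMinutes : 0;
}
```

For example, with 70 people entered, 15 exited, a threshold of 50 and an assumed average visit of 20 minutes, 55 people are inside, the remaining capacity is -5, and the formula gives a wait of 100 minutes.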

Working of the Firmware:

This is the complete setup of the firmware designed on Fritzing.

This model comprises the following hardware components:

- Arduino Nano 33 BLE Sense - Processes and classifies the gathered data and sends commands according to the programmed logic.

- MG959 / MG995 Servo - Heavy duty servo( An external power supply may be applied) - To open and close the gates as per microcontroller command.

- Arducam Mini 2mp plus - Continuous Raw data image accumulation from source.

- Adafruit lithium ion charger - Used to charge the lithium-ion battery

- Lithium ion Battery - power source

- ESP8266 - 01 - Used for sending data to the ThingSpeak dashboard via WiFi network.

- MAX7219 4 in 1 display - Used for displaying the wait time on the display screen.

Functioning and Working of Logic in Code:

The following are the libraries included in the main.ino code for the functioning of the model.

#include <TensorFlowLite.h>

#include "main_functions.h"

#include "detection_responder.h"

#include "image_provider.h"

#include "model_settings.h"

#include "person_detect_model_data.h"

#include "tensorflow/lite/micro/kernels/micro_ops.h"

#include "tensorflow/lite/micro/micro_error_reporter.h"

#include "tensorflow/lite/micro/micro_interpreter.h"

#include "tensorflow/lite/micro/micro_mutable_op_resolver.h"

#include "tensorflow/lite/schema/schema_generated.h"

#include "tensorflow/lite/version.h"

In the following code snippet, the loop is defined. Since this is the main.ino code, it controls the core functioning of the model and calls into the libraries included above.

void loop() {

// Get image from provider.

if (kTfLiteOk != GetImage(error_reporter, kNumCols, kNumRows, kNumChannels,

input->data.uint8)) {

TF_LITE_REPORT_ERROR(error_reporter, "Image capture failed.");

}

// Run the model on this input and make sure it succeeds.

if (kTfLiteOk != interpreter->Invoke()) {

TF_LITE_REPORT_ERROR(error_reporter, "Invoke failed.");

}

TfLiteTensor* output = interpreter->output(0);

// Process the inference results.

uint8_t person_score = output->data.uint8[kPersonIndex];

uint8_t no_person_score = output->data.uint8[kNotAPersonIndex];

RespondToDetection(error_reporter, person_score, no_person_score);

}

The following code snippet shows the libraries and setup required to capture the image for inference. After capture, the images are converted to a standardised 96x96 size that can be interpreted on the Arduino board.

Here, the Arducam mini 2mp OV2640 library has been utilised.

This code has been provided in the arduino_image_provider.cpp snippet

#if defined(ARDUINO) && !defined(ARDUINO_ARDUINO_NANO33BLE)

#define ARDUINO_EXCLUDE_CODE

#endif // defined(ARDUINO) && !defined(ARDUINO_ARDUINO_NANO33BLE)

#ifndef ARDUINO_EXCLUDE_CODE

// Required by Arducam library

#include <SPI.h>

#include <Wire.h>

#include <memorysaver.h>

// Arducam library

#include <ArduCAM.h>

// JPEGDecoder library

#include <JPEGDecoder.h>

// Checks that the Arducam library has been correctly configured

#if !(defined OV2640_MINI_2MP_PLUS)

#error Please select the hardware platform and camera module in the Arduino/libraries/ArduCAM/memorysaver.h

#endif

// The size of our temporary buffer for holding

// JPEG data received from the Arducam module

#define MAX_JPEG_BYTES 4096

// The pin connected to the Arducam Chip Select

#define CS 7

// Camera library instance

ArduCAM myCAM(OV2640, CS);

// Temporary buffer for holding JPEG data from camera

uint8_t jpeg_buffer[MAX_JPEG_BYTES] = {0};

// Length of the JPEG data currently in the buffer

uint32_t jpeg_length = 0;

// Get the camera module ready

TfLiteStatus InitCamera(tflite::ErrorReporter* error_reporter) {

TF_LITE_REPORT_ERROR(error_reporter, "Attempting to start Arducam");

// Enable the Wire library

Wire.begin();

// Configure the CS pin

pinMode(CS, OUTPUT);

digitalWrite(CS, HIGH);

// initialize SPI

SPI.begin();

// Reset the CPLD

myCAM.write_reg(0x07, 0x80);

delay(100);

myCAM.write_reg(0x07, 0x00);

delay(100);

// Test whether we can communicate with Arducam via SPI

myCAM.write_reg(ARDUCHIP_TEST1, 0x55);

uint8_t test;

test = myCAM.read_reg(ARDUCHIP_TEST1);

if (test != 0x55) {

TF_LITE_REPORT_ERROR(error_reporter, "Can't communicate with Arducam");

delay(1000);

return kTfLiteError;

}

The final part, where the complete model is controlled, is Arduino_detection_responder.cpp.

This is a small code snippet of the entire logic used. When the confidence score for a person is greater than the confidence score for no person, it is assumed that a person is detected and the gate is opened. For this purpose, the servo is moved to 0 degrees to open the gate. On each detection, the count, which starts at 0, is incremented by 1. This count indicates the number of people coming inside and is sent to the ThingSpeak IoT dashboard. When the count reaches 50, the gate is closed and a wait time of 15 min is imposed on the queue. The gate is closed and the wait time imposed each time another 50 people enter; for this, a check on multiples of 50 is used.

// Switch on the green LED when a person is detected,

// the red when no person is detected

if (person_score > no_person_score) {

digitalWrite(LEDG, LOW);

digitalWrite(LEDR, HIGH);

servo_8.write(0); // this servo moves to 0degrees in order to open the mall door when a person is detected to ensure no touch entry system

count++; // one more person has entered

} else {

digitalWrite(LEDG, HIGH);

digitalWrite(LEDR, LOW);

servo_8.write(180); // this servo moves to 180degrees when no person is detected

}

TF_LITE_REPORT_ERROR(error_reporter, "Person score: %d No person score: %d",

person_score, no_person_score);

// Once another 50 people have been let into the store, a 15 min wait is imposed on those waiting outside

// Displaying wait time on the screen every 1 min

if (count > 0 && count % 50 == 0) { // true whenever the count is a multiple of 50

  myDisplay.setTextAlignment(PA_CENTER);

  myDisplay.print("Waiting 15min");

  delay(60000);

}

} // end of RespondToDetection

Setting up the ThingSpeak Dashboard:

Since the features of the ThingSpeak dashboard are limited, I will not be implementing the time prediction algorithm right now, but I am working on logic to communicate and write data from the dashboard back to the microcontroller to perform the wait time calculation.

In the ThingSpeak Dashboard, I have added two fields: one for entry and the other for exit.

The coordinates of the store or mall for which the queuing system is displayed are also added in the form of a map.

The data displayed for the first field is gathered through the entry responding logic and the data displayed for the second field is gathered through the exit responding logic.

This is the snip of the two different logics used in the model.

The ThingSpeak dashboard can be made available to the staff of the store to check the number of people entering and exiting the store in real time. This data can also be analysed for a given day and time, in order to impose further restrictions if the number of people in the store exceeds the expected number on that day.

This Dashboard can be viewed here: IoT Dashboard

The following represents the field created for this purpose.

Now, a question might arise: could the person detection model be replaced by ultrasonic or infrared sensors? Flaws in ultrasonic or infrared sensors: these sensors are not very accurate, and for a real-time person count they may give wrong readings. They also add extra hardware, whereas in a go-to-market solution the person detection algorithm can be run on existing cameras, reducing hardware cost. The data from these cameras could be sent to the Arduino Nano 33 BLE Sense for central classification and data processing.

- Malls and Supermarkets can use this to identify The count of people entering and exiting the mall in real time.

- Implement Strategies using this data to ensure Safety and Compliance with efficient Queue management algorithms.

- Decrease Labour and automate Queue Management Process

- Offer Dashboard to the visitors to monitor the density of people inside the mall and accordingly visit the mall at the safest point of time.

- This product can be used to ensure the visitors that the mall is a safe place and hence, can increase the sales and visits following Government guidelines

- Companies offering AI and IoT based solutions can invest for mass production and distribution.

- The more supermarkets use this product, the more data is available to the government and the more choice customers have in selecting the safest place in their locality; the queue time required for each store can also be monitored. This gives customers a wide range of supermarkets in the locality to compare on queue time and safety.

- Comparatively affordable solution as compared to manual queuing system and updating information manually to the Dashboard.

- Utilize real-time CCTV footage to impose Queue management in a mall/shop through person detection in terms of timely trends and spatial analysis of person density in the mall.

- Enable Stores to make better, data-driven decisions that ensure your safety and efficient Queues based on autonomous queuing system.

Github Code: Arduino Autonomous TinyML and IoT based queuing system.

Addition to the Existing Person Detection Algorithm - Mask Detection System: Mask Detection Model based on TinyML:

Dr. Kierstin Kennedy, chief of hospital medicine at the University of Alabama at Birmingham, said, “Masks can protect against any infectious illness that may be spread by droplets. For example, the flu, pertussis (whooping cough), or pneumonia.”

It was also noted that wearing a cloth mask has benefits beyond slowing the spread of COVID-19, and that source control can reduce the transmission of many other easily spread respiratory infections, the kind that typically render people infectious even before they display symptoms, like influenza.

“Some international reports have noted a lower impact of flu related to the uptake of measures to prevent COVID-19.”

Until the threat of this pandemic has been neutralized, people should embrace the protection masks allow them to provide to those around them.

After all, it’s not necessarily about you — it’s about everyone you come in contact with.

It’s not at all uncommon to be an asymptomatic carrier of the new coronavirus — which means that even if you have no symptoms at all, you could potentially transmit the virus to someone who could then become gravely ill or even die.

With this in mind, I decided to enforce the wearing of face masks alongside the touch-free systems to increase safety in malls and supermarkets. In addition to the person detection algorithm, I built a custom face mask detection model that detects face masks and displays this data on the ThingSpeak IoT dashboard. This makes mall staff and incoming visitors aware of the times when the most people are without masks, and serves as a reminder to wear one. Store staff can monitor these trends and tighten restrictions based on the data.
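To make the idea of "time trends" concrete, here is a minimal sketch in plain C++ of tallying mask and no-mask detections per hour and finding the hour with the most unmasked visitors. The struct `HourlyMaskStats` and its members are hypothetical names for this example, and the hourly bucketing is an assumption; the project itself plots the counts on ThingSpeak.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

// Hypothetical per-hour tally of detections, used to find the time of day
// when unmasked visits peak.
struct HourlyMaskStats {
  std::array<uint32_t, 24> mask{};     // detections with a mask, per hour
  std::array<uint32_t, 24> no_mask{};  // detections without a mask, per hour

  // Record one detection that happened during the given hour (0-23).
  void Record(int hour, bool wearing_mask) {
    assert(hour >= 0 && hour < 24);
    if (wearing_mask) {
      ++mask[hour];
    } else {
      ++no_mask[hour];
    }
  }

  // Hour (0-23) with the most no-mask detections; the earliest hour wins ties.
  int PeakNoMaskHour() const {
    int best = 0;
    for (int h = 1; h < 24; ++h) {
      if (no_mask[h] > no_mask[best]) best = h;
    }
    return best;
  }
};
```

Staff could call `Record()` from the detection responder and check `PeakNoMaskHour()` at the end of the day to decide when extra supervision is needed.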

Deciding upon the Logic and Dataset of the Model:

This is the overall logic used in most face mask detection algorithms. Since we are deploying this model to an Arduino Nano 33 BLE Sense, the deployment process will differ.

There are two steps involved in constructing the model for Face Mask Detection.

- Training: Here we load the face mask detection dataset from disk, train a model (using Keras/TensorFlow) on this dataset, and then serialize the face mask detector to disk.

- Deployment: Once the face mask detector is trained, we load the mask detector, perform face detection, and then classify each face as mask or no_mask.

Dataset used in training this model:

The original dataset consists of 3500 images. To reduce the size of the model and feed in only the most representative images, I used 813 of them for training, which improved accuracy while reducing bulk. The model is on average 676K in size and uses nearly 440.3K of RAM. Since this is the optimized version of the original model, its accuracy is 87.47%, compared to 98.15% for the non-optimized one.

The following software has been used in designing this model:

- TensorFlow Lite

- ThingSpeak

- Arduino Web Editor

Designing the model in Edge Impulse Studio:

Powerful deep learning models (based on artificial neural networks) are now reaching microcontrollers. Over the past year great strides were made in making deep learning models smaller, faster and runnable on embedded hardware through projects like TensorFlow Lite for Microcontrollers, uTensor and Arm’s CMSIS-NN; but building a quality dataset, extracting the right features, and training and deploying these models can still be complicated.

Using Edge Impulse you can now quickly collect real-world sensor data, train ML models on this data in the cloud, and then deploy the model back to your Arduino device. From there you can integrate the model into your Arduino sketches with a single function call.

Step 1 - Acquisition of Data in the Edge Impulse Studio:

From the dataset of 3500 images, I selected the best-performing images and fed 813 images into the training data and 487 images into the testing data. I labelled the classes as mask and no_mask.
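The 813 training and 487 testing images add up to 1300 images, so roughly 62.5% of the selected data is used for training and 37.5% for testing. The small helper below is only a sketch of that arithmetic; the function name `TrainFraction` is made up for this example.

```cpp
#include <cassert>

// Fraction of all selected images that ends up in the training set.
double TrainFraction(int train_count, int test_count) {
  assert(train_count >= 0 && test_count >= 0);
  assert(train_count + test_count > 0);  // avoid dividing by zero
  return static_cast<double>(train_count) / (train_count + test_count);
}
```

With the numbers above, `TrainFraction(813, 487)` evaluates 813 / 1300, which is a little over 0.625.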

Then I created an impulse design that best suited the model type. For optimal accuracy, it is recommended to use a standard image size of 96x96, which also works best on the Arduino Nano 33 BLE Sense. Since the input type was images, I selected "images" in the processing block. For the learning block, the recommended type for image data is Transfer Learning (Images), which fine-tunes a pre-trained image model on your data and gives good performance even with relatively small image datasets.

The next step was saving the parameters, starting with color depth. I selected RGB rather than grayscale because color is an important classification feature in the dataset I am using for mask detection. On this page we can also see the raw features alongside the processed features of the image.
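The choice between RGB and grayscale also affects memory, which matters on a microcontroller. As a sketch, assuming one byte per sample (an assumption for this example, not something read from the Edge Impulse project), the input buffer for a 96x96 image can be sized like this. `ImageBytes` is a hypothetical helper name.

```cpp
#include <cassert>
#include <cstddef>

// Bytes needed for one input image, assuming 8-bit samples.
// channels is 1 for grayscale and 3 for RGB in this sketch.
std::size_t ImageBytes(std::size_t width, std::size_t height,
                       std::size_t channels) {
  assert(channels == 1 || channels == 3);
  return width * height * channels;
}
```

Under that assumption, `ImageBytes(96, 96, 3)` is 27,648 bytes for RGB, three times the 9,216 bytes of `ImageBytes(96, 96, 1)` for grayscale. That is the price of keeping color as a feature.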

After feature generation I obtained the feature explorer plot, where I could see how the samples cluster by class. The blue dots represent mask images and the orange dots represent the no_mask images.

The feature generation gave a fair separation, with the two classes forming distinct clusters in the plot.

Finally, moving on to transfer learning:

Here, I set the number of training cycles (epochs) to 30 to get the highest accuracy with the minimum val_loss. If the model is trained for too many cycles, the accuracy starts to decrease after a certain number of epochs and the val_loss increases. I therefore limited the epochs to 30, which worked well. The learning rate is set to 0.0005, which is the default and proved appropriate. I used the MobileNetV2 0.35 (final layer: 16 neurons, 0.1 dropout) model because it is comparatively lightweight and accurate.
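The reasoning about val_loss rising after too many epochs can be illustrated with a small sketch: given the validation loss recorded after each epoch, pick the epoch where it is lowest. This is plain C++ written for illustration, not what Edge Impulse runs internally, and the function name `BestEpoch` is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Index (0-based) of the epoch with the lowest validation loss.
// The earliest epoch wins ties, which favours the less-trained model.
std::size_t BestEpoch(const std::vector<double>& val_loss) {
  assert(!val_loss.empty());
  std::size_t best = 0;
  for (std::size_t i = 1; i < val_loss.size(); ++i) {
    if (val_loss[i] < val_loss[best]) best = i;
  }
  return best;
}
```

For a made-up history such as `{0.30, 0.12, 0.0727, 0.0728, 0.09}`, the function returns index 2. The loss creeps up again afterwards, which is the pattern that motivates capping the number of epochs.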

Finally, after completing 30 epochs and 10 epochs of best model performance, the accuracy approached 1.00 and the loss fell to nearly 0, at 0.0011. The following was the output during the training process:

Saving best performing model...

Converting TensorFlow Lite float32 model...

Converting TensorFlow Lite int8 quantized model with float32 input and output...

Epoch 9/10

21/21 - 5s - loss: 0.0011 - accuracy: 1.0000 - val_loss: 0.0727 - val_accuracy: 0.9755

Epoch 10/10

21/21 - 5s - loss: 0.0012 - accuracy: 1.0000 - val_loss: 0.0728 - val_accuracy: 0.9755

Finished training

The final result after training was an accuracy of 92.6% and a loss of 0.19.

Finally, using the test data, I tested the accuracy and found it to be 98.15%.
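The training log mentions converting the model to an int8 quantized version, and rounding weights and activations to 8 bits is one common reason an optimized model scores lower than the float one. As a hedged sketch, the affine mapping used by TensorFlow Lite, real = scale * (q - zero_point), can be written in plain C++ as below. The function names are made up, and the scale and zero point in the usage note are illustrative values, not ones taken from this model.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Map a real value to int8 using q = round(x / scale) + zero_point,
// clamped to the int8 range [-128, 127].
int8_t Quantize(float x, float scale, int zero_point) {
  assert(scale > 0.0f);
  int q = static_cast<int>(std::lround(x / scale)) + zero_point;
  return static_cast<int8_t>(std::min(127, std::max(-128, q)));
}

// Map an int8 value back to a real value: real = scale * (q - zero_point).
float Dequantize(int8_t q, float scale, int zero_point) {
  return scale * static_cast<float>(q - zero_point);
}
```

With an illustrative scale of 0.5 and zero point of 0, the value 0.3 quantizes to 1 and dequantizes to 0.5. The difference of 0.2 is the kind of rounding error that accumulates across a quantized network.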

With the model ready, I deployed it as an Arduino library, with the Arduino Nano 33 BLE Sense as the target firmware. I downloaded the zip folder of the library and started making changes as per our requirements.

As a last confirmation, I ran live classification to ensure that the data is classified properly. The results were as expected:

Changing the code as per the output required in the model:

Here is a snippet of the main.ino code of the mask_detection model:

I have included the Arduino libraries required for the model to function. The model itself is built on the TensorFlow Lite framework.

This is the loop that calls some of the main functions of the model, which are defined in the libraries. The main.ino code centralises these functions and calls them in a loop.

void loop() {
  // Get image from provider.
  if (kTfLiteOk != GetImage(error_reporter, kNumCols, kNumRows, kNumChannels,
                            input->data.uint8)) {
    TF_LITE_REPORT_ERROR(error_reporter, "Image capture failed.");
  }

  // Run the model on this input and make sure it succeeds.
  if (kTfLiteOk != interpreter->Invoke()) {
    TF_LITE_REPORT_ERROR(error_reporter, "Invoke failed.");
  }

  TfLiteTensor* output = interpreter->output(0);

  // Process the inference results: one uint8 score per class.
  // The index constants are assumed to be defined in the model settings.
  uint8_t mask_score = output->data.uint8[kmaskIndex];
  uint8_t no_mask_score = output->data.uint8[kno_maskIndex];
  RespondToDetection(error_reporter, mask_score, no_mask_score);
}

The processed data of this model can be viewed here: Arduino_mask_detect_model_data.h (this file is relatively large and its size varies with the dataset size of the model).
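The two uint8 scores read in loop() can be interpreted in more than one way. The sketch below, in plain C++ that runs off the board, shows one option: require a minimum gap between the scores before trusting the result, and convert a raw score to a percentage for display. The names `Detection`, `Classify` and `ScorePercent`, and the idea of a margin, are assumptions for this example and are not part of the project code.

```cpp
#include <cassert>
#include <cstdint>

enum class Detection { kMask, kNoMask, kUncertain };

// Decide between mask and no_mask, but only when the scores differ by more
// than `margin` (on the 0-255 scale); otherwise report kUncertain.
Detection Classify(uint8_t mask_score, uint8_t no_mask_score, uint8_t margin) {
  int diff = static_cast<int>(mask_score) - static_cast<int>(no_mask_score);
  if (diff > margin) return Detection::kMask;
  if (-diff > margin) return Detection::kNoMask;
  return Detection::kUncertain;
}

// Convert a raw 0-255 score to a rounded 0-100 percentage.
int ScorePercent(uint8_t score) {
  int pct = (static_cast<int>(score) * 100 + 127) / 255;
  assert(pct >= 0 && pct <= 100);
  return pct;
}
```

With a margin of 30, scores of 200 and 50 give `Detection::kMask`, while 130 and 120 give `Detection::kUncertain`. In the uncertain case a gate could stay closed until a clearer frame arrives.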

To provide images, I use the Arducam Mini 2MP Plus for visual data input. A snippet from the image provider code is:

#include "image_provider.h"

/*
 * The sample requires the following third-party libraries to be installed and
 * configured:
 *
 * Arducam
 * -------
 * 1. Download https://github.com/ArduCAM/Arduino and copy its `ArduCAM`
 *    subdirectory into `Arduino/libraries`. Commit #e216049 has been tested
 *    with this code.
 * 2. Edit `Arduino/libraries/ArduCAM/memorysaver.h` and ensure that
 *    "#define OV2640_MINI_2MP_PLUS" is not commented out. Ensure all other
 *    defines in the same section are commented out.
 *
 * JPEGDecoder
 * -----------
 * 1. Install "JPEGDecoder" 1.8.0 from the Arduino library manager.
 * 2. Edit "Arduino/Libraries/JPEGDecoder/src/User_Config.h" and comment out
 *    "#define LOAD_SD_LIBRARY" and "#define LOAD_SDFAT_LIBRARY".
 */

#if defined(ARDUINO) && !defined(ARDUINO_ARDUINO_NANO33BLE)
#define ARDUINO_EXCLUDE_CODE
#endif  // defined(ARDUINO) && !defined(ARDUINO_ARDUINO_NANO33BLE)

#ifndef ARDUINO_EXCLUDE_CODE

// Required by Arducam library
#include <SPI.h>
#include <Wire.h>
#include <memorysaver.h>
// Arducam library
#include <ArduCAM.h>
// JPEGDecoder library
#include <JPEGDecoder.h>

// Checks that the Arducam library has been correctly configured
#if !(defined OV2640_MINI_2MP_PLUS)
#error Please select the hardware platform and camera module in the Arduino/libraries/ArduCAM/memorysaver.h
#endif

The Arduino_detection_responder.cpp code performs the inference and delivers the main output required for the code. Here, when a person with a mask is detected, we are opening the gate and if a person with no mask is detected, we are closing the gate.

// Switch on the green LED when a mask is detected,
// the red when no mask is detected.
// The on-board RGB LED is active-low: LOW turns a colour on, HIGH turns it off.
if (mask_score > no_mask_score) {
  digitalWrite(LEDG, LOW);
  digitalWrite(LEDR, HIGH);
  servo_8.write(0);  // move the servo to 0 degrees to open the mall door for touch-free entry when a mask is detected
  count++;
} else {
  digitalWrite(LEDG, HIGH);
  digitalWrite(LEDR, LOW);
  servo_8.write(180);  // move the servo to 180 degrees to keep the door closed when no mask is detected
  count2++;
}

count and count2 are integer variables that increment every time a mask is detected or not detected, respectively. These counts are then displayed on the ThingSpeak IoT dashboard as follows:

The graph displays the mask and no-mask counts over time. The ThingSpeak dashboard is set up in the Arduino code as seen here:

#include <WiFi.h>
#include <WiFiMulti.h>

WiFiMulti WiFiMulti;

const char* ssid = "Yourssid";      // Your SSID (name of your WiFi)
const char* password = "Wifipass";  // Your WiFi password
const char* host = "api.thingspeak.com/channels/1118220";
String api_key = "9BRPKINQJJT2WMWP";  // Your API key provided by ThingSpeak

The complete code for the responder can be viewed here: Arduino_detection_responder.cpp

GitHub code can be viewed here: Arduino Mask Detection

The ThingSpeak dashboard can be viewed here - IoT Dashboard
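For readers who want to reproduce the dashboard, ThingSpeak accepts channel updates as an HTTP GET to api.thingspeak.com with a path of the form /update?api_key=...&field1=.... The sketch below only builds that path as a string in plain C++, so it can be checked off the board. The function name `BuildUpdatePath` is hypothetical, and putting the mask count in field1 and the no-mask count in field2 is an assumption about how the channel is laid out.

```cpp
#include <cassert>
#include <string>

// Build the request path for a ThingSpeak channel update.
// Assumes field1 holds the mask count and field2 the no-mask count.
std::string BuildUpdatePath(const std::string& write_api_key,
                            unsigned mask_count, unsigned no_mask_count) {
  assert(!write_api_key.empty());
  return "/update?api_key=" + write_api_key +
         "&field1=" + std::to_string(mask_count) +
         "&field2=" + std::to_string(no_mask_count);
}
```

For example, `BuildUpdatePath("YOUR_WRITE_KEY", 12, 3)` yields `/update?api_key=YOUR_WRITE_KEY&field1=12&field2=3`, which a WiFi client on the board could then request from api.thingspeak.com.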